Choosing the right engineering management platform is more critical than ever in 2026. This guide reviews the best alternatives to Jellyfish for engineering leaders, managers, and decision-makers who are seeking to optimize team performance, align engineering with business goals, and adapt to the rapidly evolving landscape of AI, developer experience, and workflow automation. With the increasing importance of actionable insights, real-time data, and seamless integration, selecting the right platform can directly impact your organization’s ability to deliver value, improve productivity, and stay competitive.

Jellyfish built its reputation as a pioneering engineering management platform. Since launching in 2017, the company has raised $114.5 million in funding from Accel, Insight Partners, and Tiger Global. Its core strength is aligning engineering work with business objectives, giving CFOs and VPs of Engineering a shared language around investment allocation, resource planning, and engineering effort, the inputs such platforms track for capacity planning and workload management.

That positioning served enterprise buyers well for years. But the engineering intelligence category has shifted. AI coding tools are now standard across most teams. The 2025 DORA Report introduced new measurement frameworks. Developer experience has become a board-level priority. Today, aligning engineering activities with business goals and supporting business teams with actionable insights is critical for organizations seeking to optimize outcomes.

Platforms must now provide seamless integration with existing tools to enable real-time data aggregation, supporting new measurement frameworks and the growing focus on developer experience.

The question is no longer “Where is my engineering time going?” It is “How do I measure the real impact of AI tools on delivery, code quality, and developer experience, all at once?” Making data-driven decisions is now essential for evaluating the impact of engineering investments and driving continuous improvement.

If you are evaluating Jellyfish alternatives, you are likely dealing with one or more of these friction points.

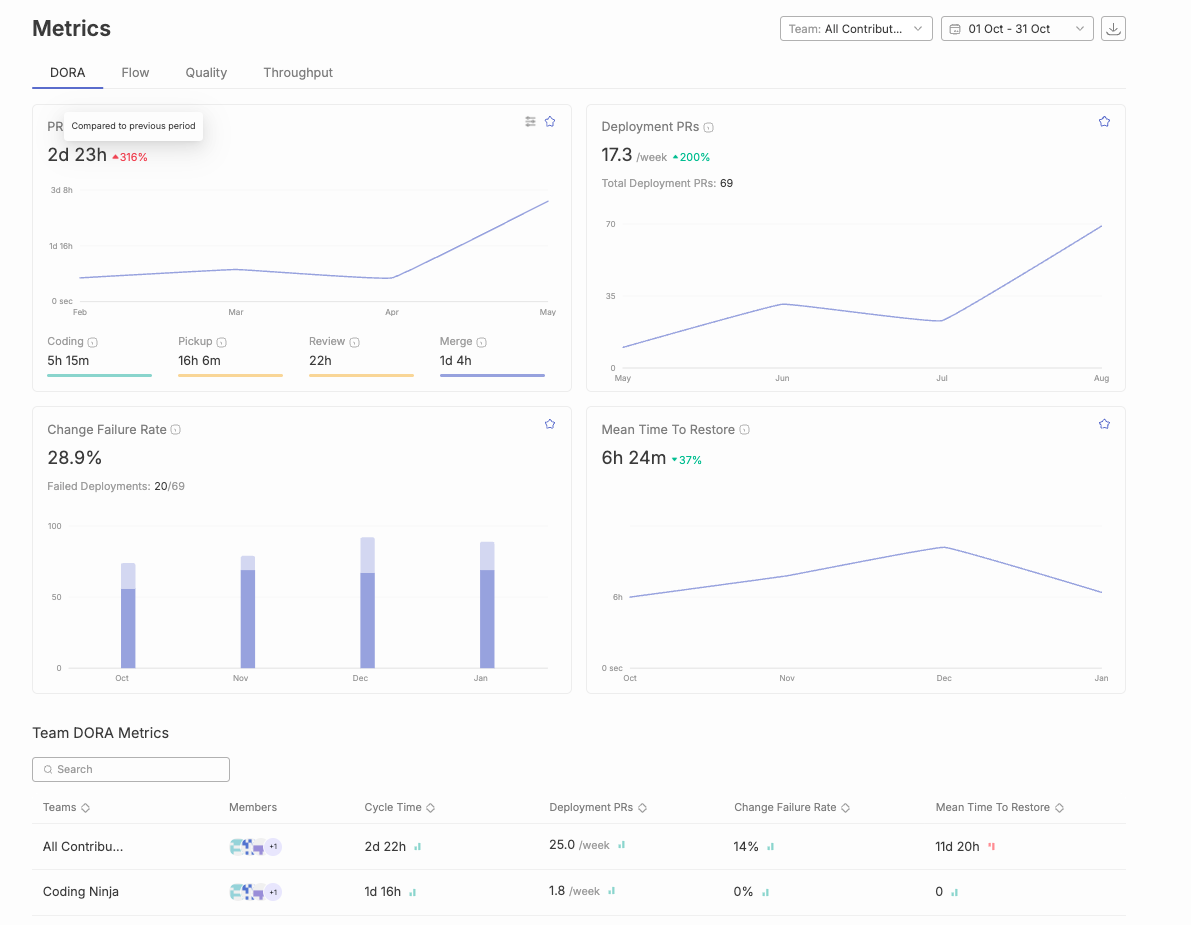

Jellyfish is a capable platform for enterprise engineering management. It does several things well: investment allocation, capacity planning, R&D cost reporting, and executive dashboards. For organizations with deep Jira workflows and clean data hygiene, it provides solid visibility into where engineering time goes. Its dashboards and reports center on quantitative metrics for monitoring team performance, so it may miss the qualitative, team-specific insights needed for a complete picture of engineering productivity.

But several patterns consistently push teams toward alternatives.

Pricing requires a sales conversation. Jellyfish does not publish pricing publicly. According to Vendr’s 2026 analysis, buyers with 50–150 engineering seats on annual contracts often see pricing in the range of $50,000–$120,000 per year. For mid-market teams with 50–200 engineers, that is a significant commitment before you have even seen the platform in action.

Setup and onboarding take time. Multiple competitor analyses and user reviews on G2 note that Jellyfish has a steep learning curve. Users report that training is vital to use the product well, and initial configuration can take weeks. Several G2 reviewers cite complex setup and the need for dedicated staff to manage the platform.

Heavy Jira dependency. Jellyfish treats Jira as its primary system of record. For teams using Linear, GitHub Issues, or hybrid setups, this Jira-centricity can become a constraint. As Faros AI’s analysis points out, Jellyfish’s approach can undercount engineering activities not tied to Jira issues. When only quantitative data is considered, technical debt and workflow bottlenecks can be overlooked, impacting long-term engineering efficiency.
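One rough way to gauge how much work a Jira-centric view might miss is to check what share of pull requests carry no Jira-style issue key. The sketch below is only an illustration: the regular expression, the function name `untracked_share`, and the sample titles are assumptions, not part of any vendor's product.

```python
import re

# Hypothetical pattern for Jira-style keys such as "ENG-123".
# Adjust it to the project keys your organization actually uses.
JIRA_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def untracked_share(pr_titles):
    """Return the fraction of PR titles that contain no Jira-style issue key."""
    if not pr_titles:
        return 0.0
    untracked = [title for title in pr_titles if not JIRA_KEY.search(title)]
    return len(untracked) / len(pr_titles)


# Illustrative data only.
sample_titles = [
    "ENG-101 Add retry to webhook sender",
    "Fix flaky test in payments module",
    "PLAT-7: bump base image",
    "Refactor logging setup",
]
print(f"{untracked_share(sample_titles):.0%} of PRs have no Jira key")
```

A high share suggests that allocation reports built mainly on Jira issues would leave out a meaningful part of the team's actual work.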

Limited AI coding impact measurement until recently. Jellyfish launched AI Impact tracking in late 2024 and has expanded it since. However, many teams evaluating alternatives report needing deeper AI measurement: not just adoption tracking (who is using Copilot or Cursor), but actual impact on cycle time, code quality, and PR outcomes across AI-assisted versus non-AI PRs.

No automated code review. Jellyfish does not include a code review agent. If you want AI-powered code review alongside your engineering analytics, you need a separate tool or a platform that bundles both.

Customization gaps. G2 reviews consistently flag limited customization as a friction point. Of the top complaint themes, 21 mentions specifically call out lack of custom reporting flexibility, and 19 mentions note limited features and integration depth. Teams increasingly need comprehensive code insights and tailored insights to better understand team performance and address unique workflow challenges.

Many software teams struggle with the limitations of purely quantitative data, realizing it doesn't tell them how to improve or what's happening outside of platforms like Git and Jira, which can hinder actionable progress and slow development speed.

Before comparing specific platforms, it helps to know what separates a useful engineering intelligence tool from one that creates more dashboard fatigue. Here is what matters in 2026. Integration with existing tools and customizable dashboards are essential for capturing real-time data automatically and turning it into actionable insights across engineering teams.

Effective AI-powered workflow optimization also requires tools that highlight bottlenecks in the development process and offer specific, data-driven recommendations for fixing them.

Alternatives to Jellyfish, such as DX, LinearB, Swarmia, Haystack, Waydev, and Pluralsight Flow, address its main limitations in different ways. Several combine qualitative and quantitative insights, which helps teams track performance and identify bottlenecks more effectively. Swarmia and Pluralsight Flow focus on team dynamics and workflow optimization rather than Jellyfish's top-down reporting. Haystack and Waydev emphasize real-time insights and proactive identification of bottlenecks rather than a more rigid reporting structure. Jellyfish itself is often criticized for limited customization and a lack of focus on developer experience, which has led many teams to seek alternatives that offer better insight into the daily challenges developers face.

When evaluating alternatives to Jellyfish, it's important to consider how some platforms position themselves as engineering effectiveness platforms—offering not just analytics, but comprehensive solutions for operational efficiency, code quality, and developer productivity. The table below compares seven alternatives across the capabilities that matter most for engineering leaders in 2026.

Notably, alternatives to Jellyfish such as DX and Typo AI combine both qualitative and quantitative insights, enabling teams to track performance and identify bottlenecks more effectively than platforms focused solely on high-level metrics.

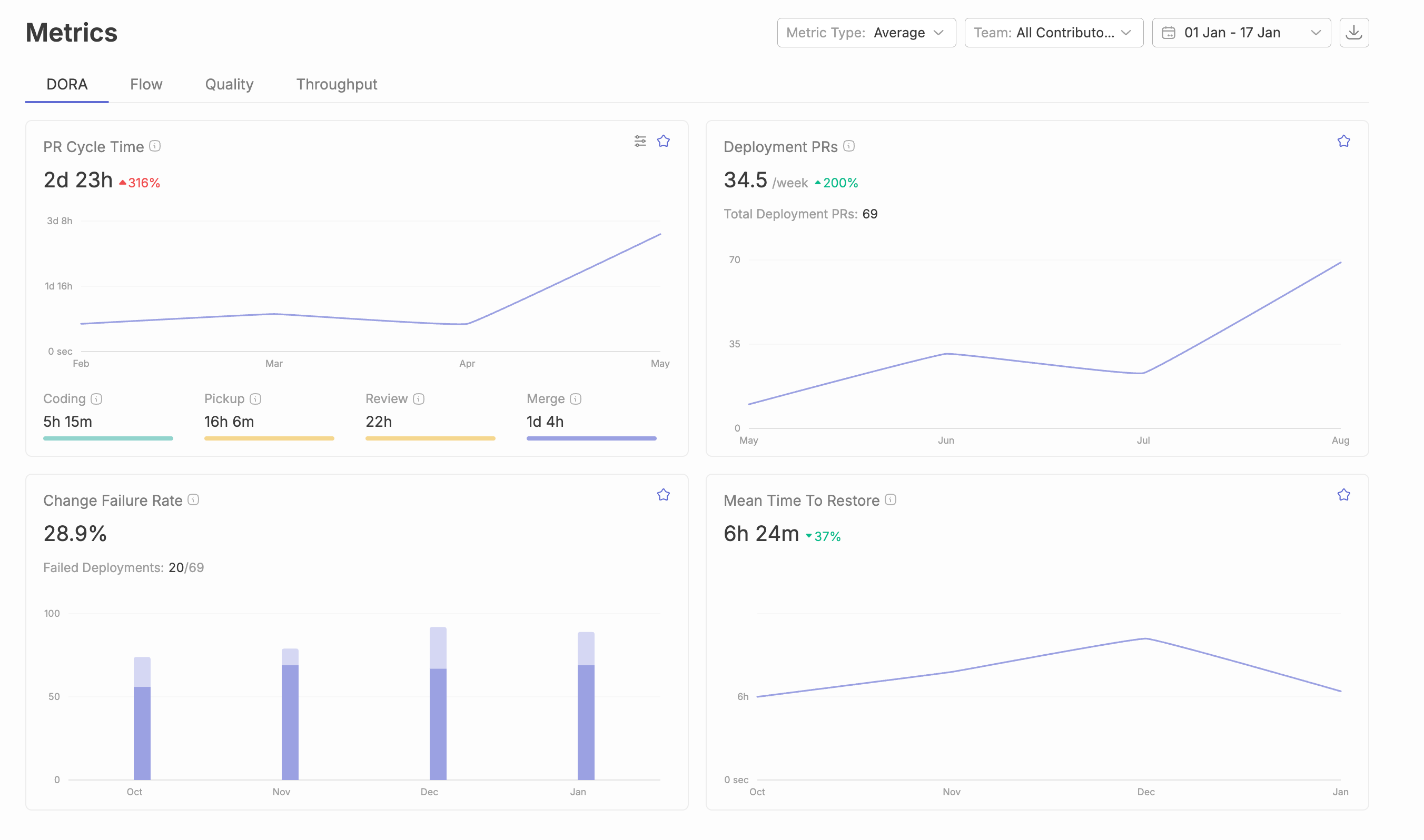

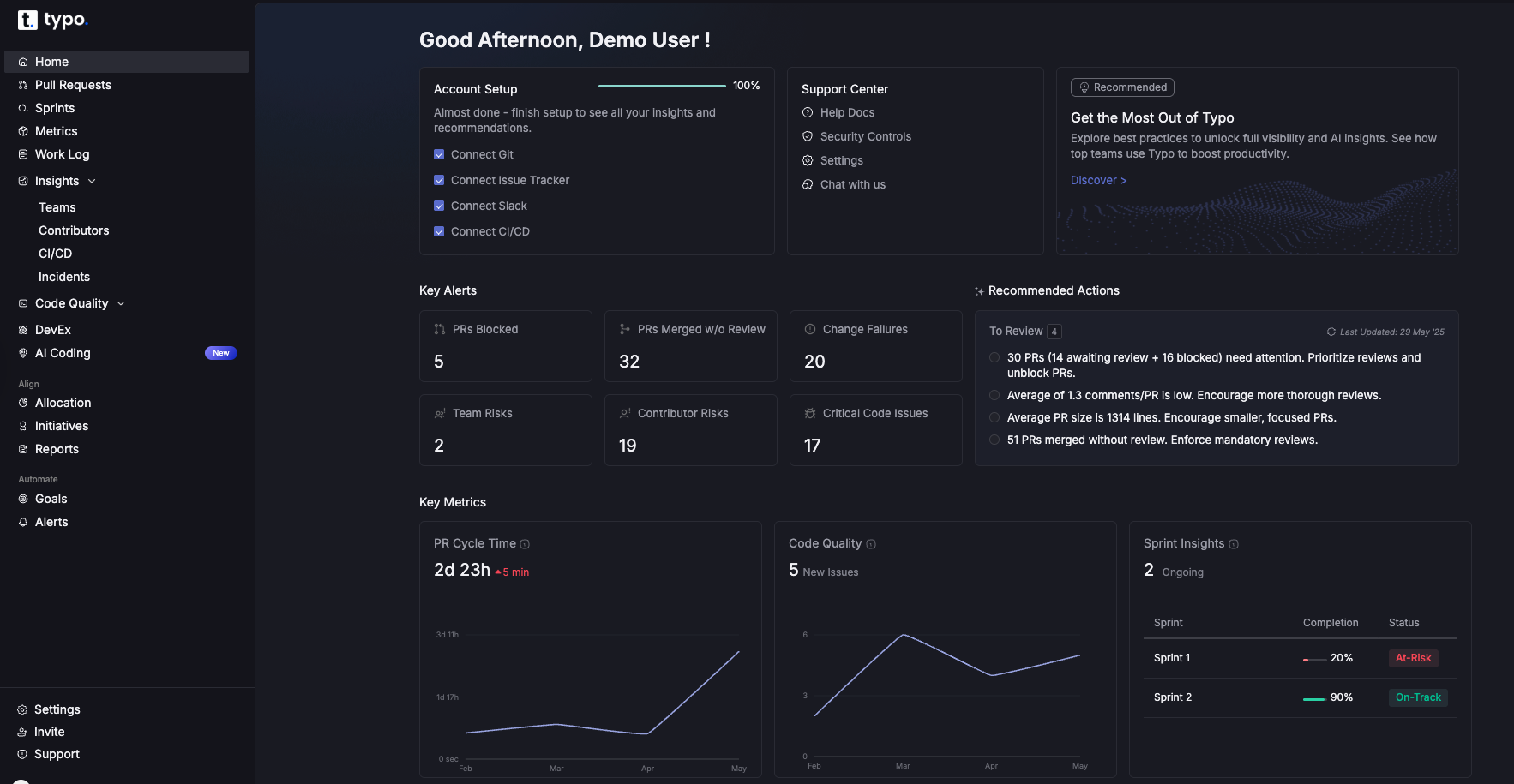

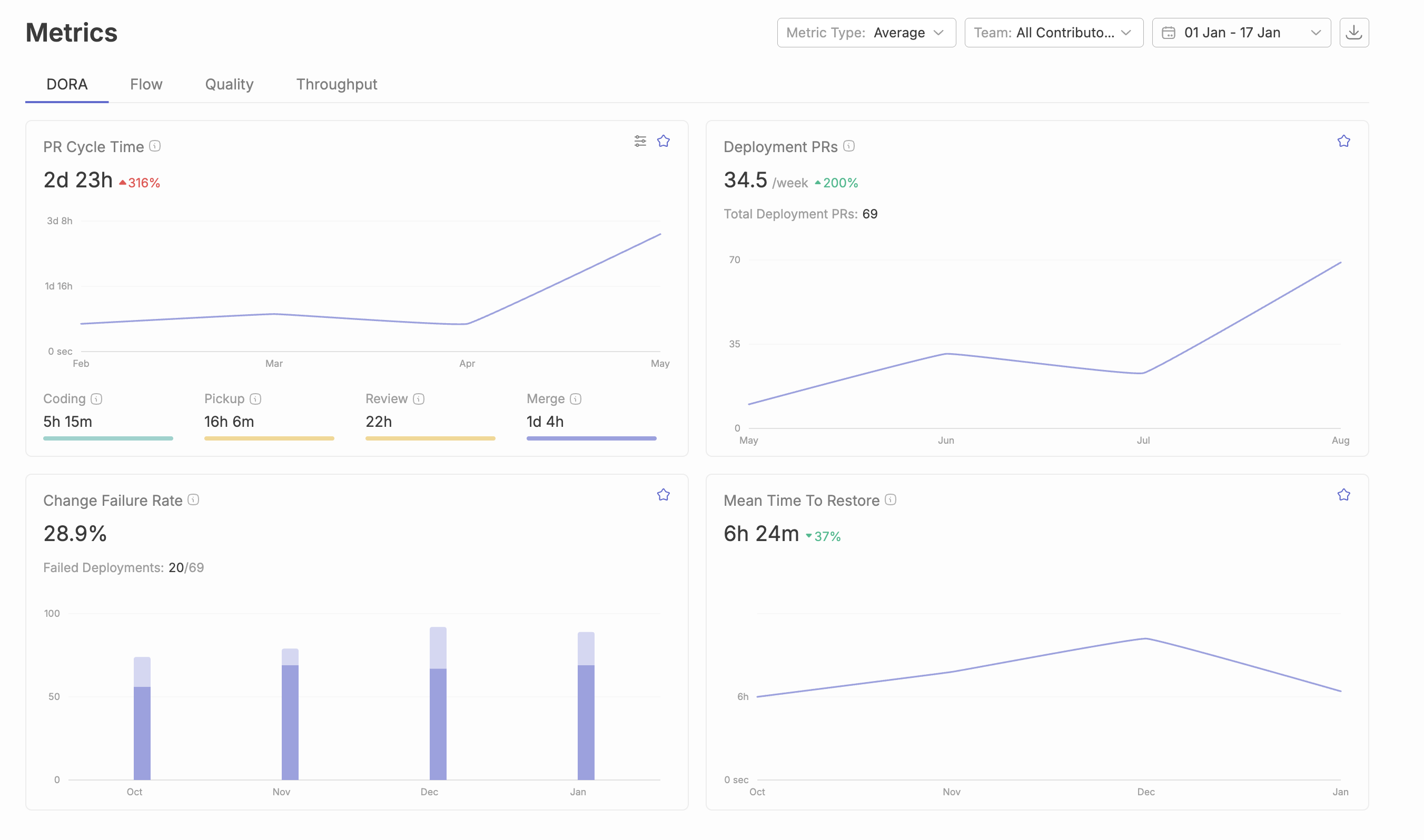

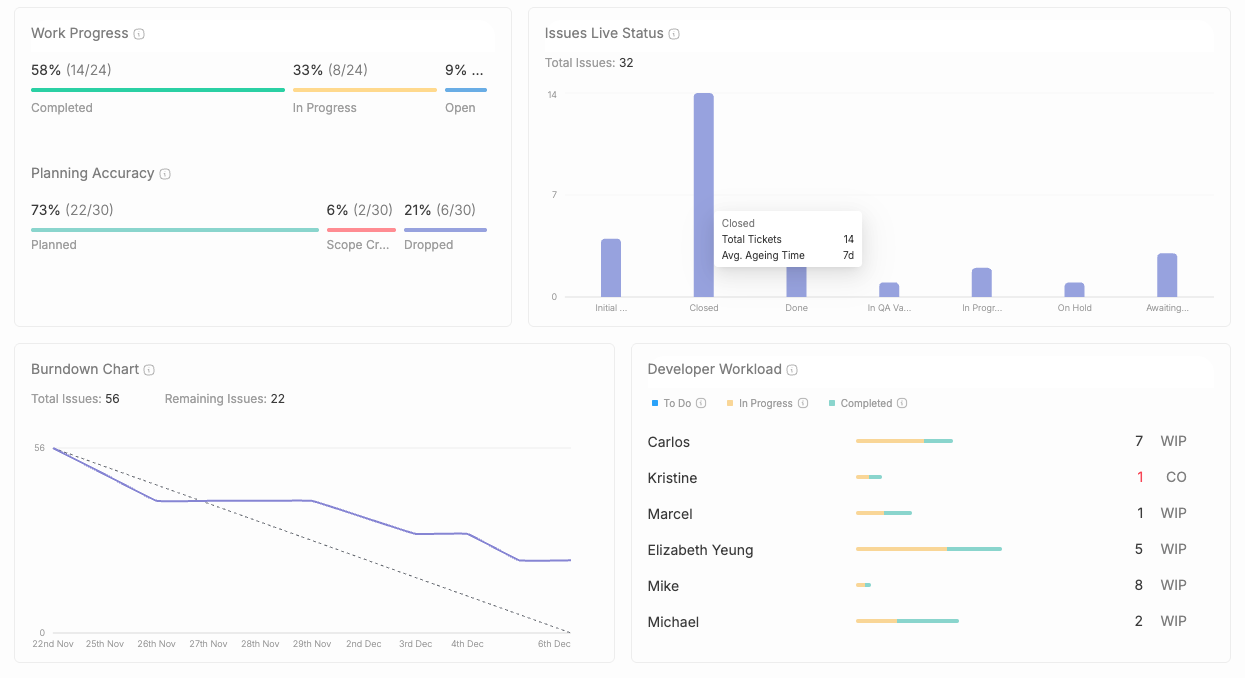

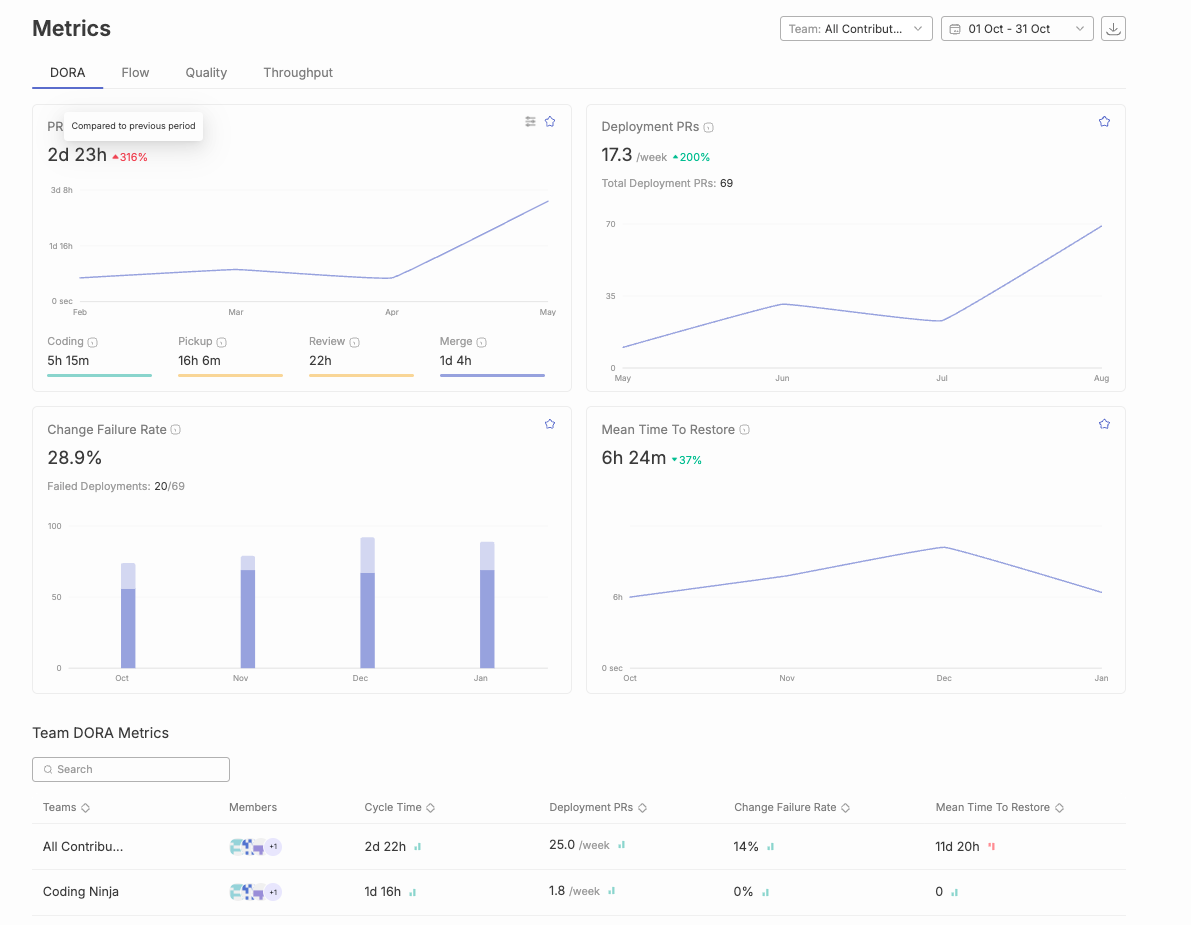

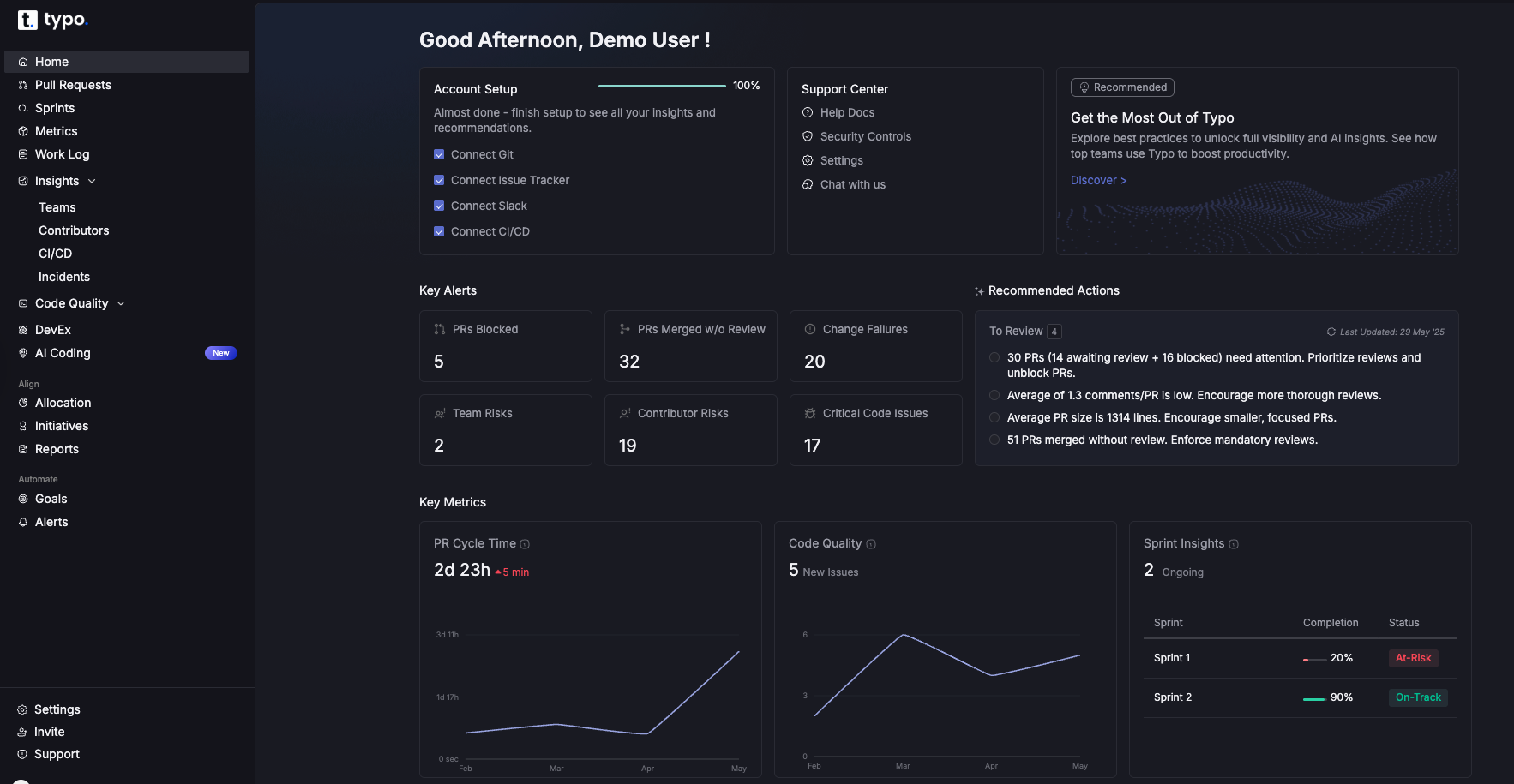

Typo AI is an engineering effectiveness platform that combines SDLC visibility, AI coding tool impact measurement, automated AI code reviews, and developer experience surveys in a single product. It provides comprehensive code insights and tracks DORA and SPACE metrics to help teams optimize productivity, software health, and operational efficiency. Typo connects to GitHub, GitLab, Bitbucket, Jira, Linear, and CI/CD pipelines.

Where Typo differs from Jellyfish is scope and speed. Jellyfish focuses primarily on engineering-to-business alignment, investment allocation, and financial reporting. Typo starts from how work actually moves through the SDLC and layers in AI impact, code quality, and developer experience on top of that foundation, reflecting its broader mission to redefine engineering intelligence.

Key strengths:

Customer proof points:

Typo is a G2 Leader with 150+ reviews, trusted by 1,000+ engineering teams, and featured in Gartner’s Market Guide for Software Engineering Intelligence Platforms.

Best for: Engineering teams at mid-market SaaS companies (50–500 engineers) who need unified visibility across delivery, AI impact, code quality, and developer experience, without enterprise pricing or multi-week onboarding.

LinearB focuses on engineering workflow automation, DORA metrics, and cycle time analytics. As an engineering analytics tool, it provides instant insights and workflow automation, delivering automated improvement actions like PR bots and alerts that help teams enforce working agreements around PR size, review turnaround, and merge frequency.
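To make the idea of a working agreement concrete, here is a minimal sketch of the kind of check a PR bot could run. It does not reflect how LinearB or any other vendor implements this; the `PullRequest` fields and both thresholds are hypothetical values a team would set for itself.

```python
from dataclasses import dataclass


@dataclass
class PullRequest:
    number: int
    lines_changed: int
    hours_to_first_review: float


# Hypothetical thresholds; each team would agree on its own numbers.
MAX_LINES_CHANGED = 400
MAX_HOURS_TO_FIRST_REVIEW = 24.0


def agreement_violations(pr):
    """Return human-readable descriptions of the agreements this PR breaks."""
    violations = []
    if pr.lines_changed > MAX_LINES_CHANGED:
        violations.append(
            f"PR #{pr.number}: {pr.lines_changed} lines changed "
            f"(limit {MAX_LINES_CHANGED})"
        )
    if pr.hours_to_first_review > MAX_HOURS_TO_FIRST_REVIEW:
        violations.append(
            f"PR #{pr.number}: first review after {pr.hours_to_first_review:.0f}h "
            f"(limit {MAX_HOURS_TO_FIRST_REVIEW:.0f}h)"
        )
    return violations


# Illustrative usage: one oversized, slowly reviewed PR.
for message in agreement_violations(PullRequest(42, 900, 30.0)):
    print(message)
```

In practice the output would feed an alert or a PR comment instead of `print`, but the comparison against agreed thresholds is the core of the technique.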

Where LinearB stands out: It is actionable at the team level. Instead of high-level allocation reporting, LinearB provides specific bottleneck identification, tracks key engineering metrics, and offers automated fixes. The free tier is generous for small teams, making it an accessible starting point.

Where it falls short versus Jellyfish alternatives: LinearB does not include native AI coding impact measurement. It does not offer automated code review. And it lacks DevEx survey capabilities. Teams who need to measure how AI tools affect delivery or who want code review bundled into their analytics platform will need to look elsewhere.

Best for: Engineering managers focused on process efficiency who want automated interventions in their development pipeline, particularly those starting with a free-tier budget.

Swarmia combines DORA metrics with developer experience signals. It tracks cycle time, deployment frequency, and review throughput alongside “working agreements” that let teams set norms like PR size limits and review turnaround expectations. It helps software development teams track quality metrics and improve productivity through actionable insights and real-time analytics.

Where Swarmia stands out: Clean UX. Team-first approach. Positions itself explicitly as the “humane alternative to engineering surveillance,” which resonates with engineering leaders who care about developer experience as much as process metrics. The company raised €10 million in June 2025, signaling continued growth.

Where it falls short: No automated code review. Limited AI coding impact measurement. Limited customization for complex enterprise needs. G2 and Reddit discussions consistently cite feature depth as the primary gap compared to more comprehensive platforms.

Best for: Teams starting their metrics journey who want clean dashboards and team-first norms without enterprise complexity.

DX (formerly GetDX) is an engineering intelligence platform founded by the researchers who created the DORA, SPACE, and DevEx frameworks. It combines structured developer surveys with system metrics through its Data Cloud product to measure developer experience, productivity friction, and organizational health, alongside developer productivity and individual performance metrics. DX treats developer feedback as a qualitative signal, so both quantitative data and direct input from developers are used to identify issues and suggest improvements.

Where DX stands out: Deep research pedigree. The DX Core 4 framework is becoming a standard reference in the DevEx space. No other tool has the same academic backing for its measurement methodology. DX leverages the SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) to provide a holistic view of developer experience and productivity.

Where it falls short: DX is primarily a survey and sentiment platform. It incorporates self-reported metrics from developers, which complement system-generated data, but it does not include automated code review, native AI coding impact measurement, or deep SDLC analytics. If you need to see how work moves through your pipeline, diagnose PR bottlenecks, or track AI tool impact on cycle time, DX will not cover those use cases alone.

Best for: Organizations investing heavily in developer experience improvement and platform engineering who want the most rigorous DevEx measurement methodology available.

Haystack is a lightweight engineering analytics platform focused on DORA metrics, delivery visibility, and team health. It targets teams that want engineering metrics without the complexity of enterprise platforms, and it provides real-time data and visibility for distributed software teams.

Where Haystack stands out: Quick setup, transparent pricing, and a focused feature set that does not overwhelm smaller teams. For engineering managers overseeing 5–50 developers who need basic delivery visibility, Haystack provides fast time-to-value.

Where it falls short: No AI coding impact measurement. No automated code review. No DevEx surveys. As teams scale past 50–100 engineers or need to measure AI tool ROI, Haystack’s feature set may not keep pace.

Best for: Small-to-mid engineering teams (under 50 developers) who want straightforward delivery metrics without enterprise complexity or pricing.

Waydev is an engineering intelligence platform that uses DORA metrics, the SPACE framework, developer experience insights, and AI capabilities to provide delivery analytics. It automatically tracks work from Git activity, visualizes project timelines, and includes basic code review workflow features, while also offering detailed analytics on pull requests and the entire development lifecycle.

Where Waydev stands out: Automated work logs from Git activity eliminate manual entry. Project timeline visualization gives clear progress views. Sprint planning integration supports agile workflows. Waydev also supports the software delivery process by providing engineering insights that help teams optimize performance and identify bottlenecks.

Where it falls short: AI coding impact measurement is limited. Code review capabilities are basic compared to dedicated AI code review tools. DevEx survey depth does not match platforms like DX or Typo.

Best for: Teams that want Git-level activity analytics with automated work tracking and sprint planning support.

Pluralsight Flow (formerly GitPrime) tracks coding activity: commits, lines of code, code churn, and review patterns. It was acquired by Appfire from Pluralsight in February 2025 and now operates as a standalone product within the Appfire ecosystem alongside BigPicture PPM and 7pace Timetracker. Flow also tracks individual performance metrics, giving visibility into productivity for both teams and individual developers, similar to platforms like Code Climate Velocity.

Where Flow stands out: Mature Git activity analytics with ML-powered insights. The Appfire ecosystem positions it alongside project management tools. For organizations already invested in Pluralsight for developer training, Flow provides natural synergy.

Where it falls short: No AI coding impact measurement. No automated code review. No DevEx surveys. No manager productivity agents. The platform focuses on Git-level patterns rather than full SDLC visibility, and it does not cover sprint analytics, deployment metrics, or incident tracking.

Best for: Large organizations that want mature Git analytics and are already invested in the Appfire or Pluralsight ecosystem.

The right platform depends on what gap Jellyfish is not filling for your team. Here is a framework for making the decision. For engineering organizations, data-driven insights are essential: they enable leaders to make informed decisions, optimize workflows, and align engineering efforts with strategic goals.

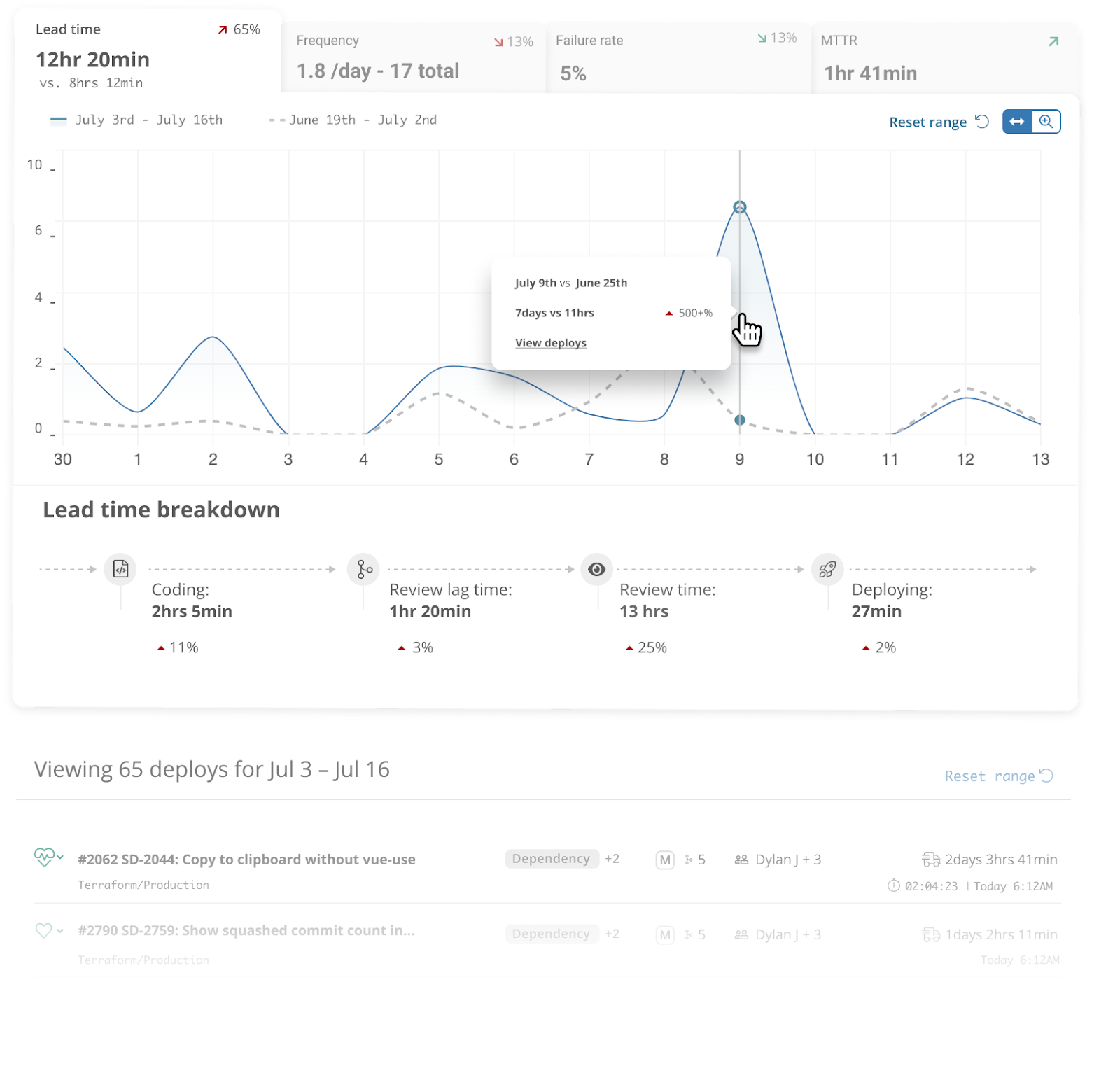

If your primary need is measuring AI coding tool impact: Typo is the strongest option for improving developer productivity with AI intelligence. It natively tracks GitHub Copilot, Cursor, Claude Code, and CodeWhisperer, and compares AI-assisted versus non-AI PR outcomes on cycle time, quality, and developer experience. Jellyfish added AI Impact tracking recently, but Typo’s approach measures verified impact, not just adoption.

If you need automated code review bundled with analytics: Typo is the only platform on this list that includes a context-aware AI code review agent alongside SDLC analytics, AI impact measurement, and DevEx surveys. Every other alternative requires a separate code review tool. Typo also supports engineering productivity and team efficiency by surfacing actionable metrics and workflow bottlenecks.

If your primary need is developer experience measurement: DX offers the deepest research-backed methodology. Typo offers DevEx surveys combined with delivery analytics and AI impact in one platform. The tradeoff is depth of DevEx research (DX) versus breadth of the platform (Typo).

If budget is your primary constraint: LinearB’s free tier or Swarmia’s transparent pricing provide accessible starting points. Typo also offers flexible plans and a self-serve free trial with no sales call required.

If you need enterprise finance alignment: Jellyfish may still be the right choice. Its investment allocation, R&D capitalization, and DevFinOps features are designed for CFO-level conversations. Jellyfish stands out for tracking engineering investments and aligning them with business outcomes. No alternative on this list matches Jellyfish’s depth in financial engineering reporting.

The 2025 DORA Report found that 90% of developers now use AI coding tools. But the report also found that AI amplifies existing practices rather than fixing broken ones. Teams with poor DORA baselines do not improve with AI. They accelerate their dysfunction.

This creates a measurement problem. Most organizations track AI tool adoption through license counts. They know how many seats are active. They do not know whether those tools are actually improving delivery speed, code quality, or developer experience. Tracking engineering effort and resource allocation is essential for understanding the true impact of AI tools, as it reveals how team resources are distributed and whether productivity gains are realized.

That gap is why AI coding impact measurement has become the defining capability in the engineering intelligence category. It is not enough to know that 80% of your team uses Copilot. You need to know whether AI-assisted PRs merge faster, introduce more rework, or create code quality issues that show up downstream, and whether they add to technical debt or genuinely improve developer productivity.

Platforms that can answer that question, with verified data from your actual engineering workflow, are the ones worth evaluating.
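The AI-assisted versus non-AI comparison described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: each PR record is a dictionary with hypothetical `ai_assisted`, `opened`, and `merged` fields, and how a PR gets labeled as AI-assisted is left out entirely. It is not any platform's actual method.

```python
from datetime import datetime
from statistics import median


def cycle_time_hours(opened, merged):
    """Hours between a PR being opened and merged."""
    return (merged - opened).total_seconds() / 3600


def median_cycle_time_by_group(prs):
    """Median open-to-merge time for AI-assisted and non-AI PRs.

    prs: iterable of dicts with 'ai_assisted' (bool) and 'opened' / 'merged'
    (datetime). These field names are assumptions made for this sketch.
    """
    groups = {True: [], False: []}
    for pr in prs:
        groups[pr["ai_assisted"]].append(cycle_time_hours(pr["opened"], pr["merged"]))
    return {
        "ai_assisted": median(groups[True]) if groups[True] else None,
        "non_ai": median(groups[False]) if groups[False] else None,
    }


# Illustrative data only.
sample_prs = [
    {"ai_assisted": True, "opened": datetime(2026, 1, 5, 9), "merged": datetime(2026, 1, 5, 21)},
    {"ai_assisted": True, "opened": datetime(2026, 1, 6, 9), "merged": datetime(2026, 1, 7, 9)},
    {"ai_assisted": False, "opened": datetime(2026, 1, 5, 9), "merged": datetime(2026, 1, 6, 15)},
    {"ai_assisted": False, "opened": datetime(2026, 1, 7, 9), "merged": datetime(2026, 1, 9, 9)},
]
print(median_cycle_time_by_group(sample_prs))
```

A faster median for the AI-assisted group is only part of the picture. The same grouping would need to be applied to rework and defect data before drawing conclusions about quality.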

Jellyfish built a strong foundation in the engineering management space. For enterprise teams that need deep investment allocation, R&D capitalization, and finance alignment, it remains a capable option.

But the category has evolved. AI coding tools have changed what engineering leaders need to measure. Developer experience has become a board-level priority. The importance of software delivery, team collaboration, and operational efficiency has grown as organizations seek platforms that optimize the entire development lifecycle. And the bar for setup speed and pricing transparency has risen.

If you are looking for a platform that covers SDLC visibility, AI coding impact measurement, automated code reviews, and developer experience in a single product, with a setup that takes 60 seconds instead of 60 days, Typo is worth evaluating.

What is Jellyfish used for?

Jellyfish is an engineering management platform that aligns engineering work with business objectives. It provides visibility into investment allocation, resource planning, R&D capitalization, and delivery metrics. It integrates with Jira, GitHub, GitLab, and other development tools.

How much does Jellyfish cost?

Jellyfish does not publish pricing publicly. Based on Vendr’s 2026 market data, annual contracts for 50–150 engineering seats typically range from $50,000 to $120,000, depending on modules, integrations, and contract terms.

What are the main limitations of Jellyfish?

Common friction points reported by users include: steep learning curve and complex initial setup, heavy dependency on Jira data quality, no automated AI code review capability, limited custom reporting flexibility, lack of customizable dashboards, limited tracking of quality metrics, and opaque pricing that requires a sales conversation.

Does Jellyfish measure AI coding tool impact?

Jellyfish added AI Impact tracking in late 2024, which measures AI tool adoption and usage across coding assistants like GitHub Copilot and Cursor. However, other engineering analytics tools, such as Typo, provide more granular analysis of pull requests and quality metrics, enabling deeper AI-vs-non-AI PR comparison at the delivery impact level.

What is the best Jellyfish alternative for mid-market teams?

For mid-market engineering teams (50–500 engineers) that need unified SDLC visibility, AI coding impact measurement, automated code review, and DevEx surveys in a single platform, Typo offers the most comprehensive coverage with the fastest setup (60 seconds) and self-serve pricing.

GitHub Copilot, Cursor, and Claude Code represent the three dominant paradigms in AI coding tools for 2026, each addressing fundamentally different engineering workflow needs. With 85% of developers now using AI tools regularly and engineering leaders actively comparing options in ChatGPT and Claude conversations, choosing the right AI coding assistant has become a strategic decision with measurable impact on delivery speed and code quality.

This guide covers performance benchmarks, pricing analysis, enterprise readiness, and measurable productivity impact specifically for engineering teams of 20-500 developers. It falls outside our scope to address hobbyist use cases or tools beyond these three leaders. The target audience is engineering managers, VPs of Engineering, and technical leads who need data-driven comparisons rather than developer preference debates.

The direct answer: GitHub Copilot excels at IDE integration and enterprise governance with 20M+ users and Fortune 100 adoption. Cursor leads in flow state maintenance and multi-file editing for small-to-medium tasks. Claude Code dominates complex reasoning and architecture changes with its 1M token context window and 80.8% SWE-bench score.

By the end of this comparison, you will:

While these three tools boost individual productivity, measuring their actual impact on delivery speed and code quality requires dedicated engineering intelligence platforms that track AI-influenced outcomes across your entire codebase.

The 2026 landscape of AI coding tools has crystallized into three distinct approaches: IDE-integrated completion tools that augment familiar interfaces, AI-native editing environments that reimagine the development workflow entirely, and terminal-based autonomous agents that execute complex tasks independently. Understanding these categories is essential because each addresses different engineering bottlenecks.

IDE-integrated tools like GitHub Copilot work within your existing development environment. GitHub Copilot is an extension that works across multiple IDEs, and it is the only one of the three tools that supports a wide range of editors without requiring a switch. Developers keep their familiar interface, existing extensions, and muscle memory while gaining inline suggestions and chat capabilities. This approach minimizes change management friction and enables gradual adoption across teams using VS Code, JetBrains, or Neovim.

Standalone solutions like Cursor require switching development environments entirely. Cursor is a standalone IDE built as a VS Code fork with AI integrated into every workflow, making it a complete editor redesigned around AI-assisted development. Because it is a VS Code fork, Cursor feels familiar, but teams must still switch editors and migrate configurations. This tradeoff delivers deeper AI integration at the cost of adoption friction. Enterprise teams often find IDE-integrated approaches easier to roll out, while power users willing to embrace change may prefer the cohesion of AI-native environments.

Code completion tools focus on high-frequency, low-friction suggestions. You write code, and AI-generated code appears inline, accepted with a single keystroke. This approach optimizes for flow state and immediate productivity on the current file.

Autonomous coding through agent mode takes a fundamentally different approach. You describe a task in natural language, and the terminal agent executes multi-step tasks across multiple files, potentially generating entire features or refactoring existing codebases. Claude Code, a terminal-based AI coding agent that autonomously writes, refactors, debugs, and deploys code, leads this category, achieving higher solve rates on complex problems but requiring developers to adapt to conversational coding workflows.

The choice between approaches depends on your primary bottleneck. If developers spend most time on incremental coding, autocomplete delivers immediate time saved. If architectural changes, debugging intermittent issues, or navigating very large codebases consume significant cycles, autonomous agents provide greater leverage.

Building on these foundational distinctions, each tool demonstrates specific capabilities and measurable impact that matter for engineering teams evaluating options.

GitHub Copilot serves over 20 million developers and has become the Fortune 100 standard for AI-assisted development. Its deep integration with the GitHub ecosystem provides seamless workflow integration from code completion through pull request review.

Core strengths: Cross-IDE support spans Visual Studio, VS Code, JetBrains, Neovim, and CLI tools. Enterprise compliance features include SOC 2 certification, IP indemnification, and organizational policy controls. The Business tier ($19/user/month) provides admin controls and 300 premium requests monthly; Enterprise ($39/user/month) adds repository indexing, custom fine-tuned models (beta), and 1,000 premium requests.
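For budgeting, the per-seat prices quoted above translate into annual figures with simple arithmetic. The sketch below assumes list prices only, with no discounts and no charges beyond the included premium requests; the function name and the seat counts are illustrative.

```python
# Per-seat monthly prices in USD, as quoted in this article.
BUSINESS_PER_SEAT = 19
ENTERPRISE_PER_SEAT = 39


def annual_license_cost(seats, per_seat_monthly):
    """Annual list-price licensing cost, ignoring discounts and overage charges."""
    return seats * per_seat_monthly * 12


# Illustrative team sizes within the 20-500 developer range this guide targets.
for seats in (20, 100, 500):
    business = annual_license_cost(seats, BUSINESS_PER_SEAT)
    enterprise = annual_license_cost(seats, ENTERPRISE_PER_SEAT)
    print(f"{seats} seats: Business ${business:,}/yr, Enterprise ${enterprise:,}/yr")
```

Actual spend may differ once usage beyond the included premium requests or negotiated pricing comes into play, so treat these numbers as a floor for comparison rather than a quote.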

Measurable impact: Best for enterprise teams needing consistent autocomplete across diverse development environments. Studies show inline suggestion acceptance rates of 35-40% without further editing. Agent mode and code review features enable multi-file changes, though not as autonomously as Claude Code.

Key limitations: The context window presents the most significant constraint. While GPT-5.4 theoretically supports ~400,000 tokens, users report practical limits around 128-200K tokens with early summarization. For complex tasks spanning multiple files or requiring deep understanding of existing codebase, this limitation affects output quality.

Cursor positions itself as the AI coding tool for developers who want AI woven into every aspect of their workflow. As a standalone IDE built on a fork of VS Code and redesigned around AI-assisted development, it attracts over 1 million users seeking deeper integration than plugin-based approaches.

Core strengths: Composer mode enables multi-file editing with context awareness across your entire project. Background cloud agents handle complex refactoring while you work on other tasks. Supermaven autocomplete achieves approximately 72% acceptance rates in benchmarks, significantly higher than alternatives for simple completions.

Measurable impact: Cursor completes SWE-bench tasks approximately 30% faster than Copilot for small-to-medium complexity work. First-pass correctness reaches ~73% overall, with ~42-45% of inline suggestions accepted without further editing. The tool excels at maintaining flow state, staying out of the way until needed.

Key limitations: Requires teams to switch editors, creating adoption friction. Token-based pricing through Cursor Pro can become unpredictable under heavy usage. On hard tasks, correctness drops to ~54% compared to Claude Code’s ~68%. The underlying model determines actual capabilities, making performance variable depending on configuration.

Claude Code operates as a terminal agent optimized for autonomous coding on complex tasks: it writes, refactors, debugs, and deploys code autonomously rather than from inside an IDE. Its 200K standard context window (up to 1M in enterprise/beta tiers) enables reasoning across entire codebases that would overwhelm other tools.

Core strengths: The largest context window available enables architectural changes, legacy system navigation, and debugging intermittent issues that require understanding thousands of files simultaneously. Agent teams enable parallel workflows. The 80.8% SWE-bench Verified score demonstrates superior performance on complex problems. VS Code and JetBrains extensions add Claude Code to existing workflows for those who prefer IDE integration.

Measurable impact: Claude Code leads on first-pass correctness at ~78% overall, reaching ~68% on hard tasks versus Cursor’s ~54%. Pull request acceptance rates show 92.3% for documentation tasks and 72.6% for new features. Complex refactoring executes approximately 18% faster than Cursor.

Key limitations: The terminal-only primary interface imposes a learning curve on developers accustomed to IDE-centric workflows. Usage-based pricing for extended context can become expensive for teams regularly using 1M-token sessions. Performance degrades around 147-150K tokens before auto-compaction triggers, requiring prompt engineering to manage context effectively.
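One simple way to manage context is to estimate how much material fits under a chosen token budget before handing it to an agent. The sketch below is an assumption-heavy illustration: it uses a rough four-characters-per-token heuristic instead of a real tokenizer, and both the budget and the helper names are hypothetical.

```python
# Rough heuristic, NOT a real tokenizer: assume about 4 characters per token.
CHARS_PER_TOKEN = 4
# Hypothetical budget, set at the low end of the 147-150K range quoted above.
DEFAULT_BUDGET_TOKENS = 147_000


def estimate_tokens(text):
    """Approximate token count from character length."""
    return len(text) // CHARS_PER_TOKEN


def select_within_budget(files, budget_tokens=DEFAULT_BUDGET_TOKENS):
    """Pick files in priority order until the estimated budget is used up.

    files: list of (name, text) tuples, most important first.
    Returns (selected_names, estimated_tokens_used).
    """
    selected, used = [], 0
    for name, text in files:
        cost = estimate_tokens(text)
        if used + cost > budget_tokens:
            continue  # skip files that would exceed the budget
        selected.append(name)
        used += cost
    return selected, used
```

Ordering the input by importance matters here, since files that would overflow the budget are skipped in favor of smaller ones further down the list.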

Interpreting benchmark data requires understanding that synthetic benchmarks don’t directly translate to productivity gains in your specific codebase and workflow patterns.

SWE-bench Verified measures complex correctness on real-world code tasks. Claude Code (Opus 4.5) achieves ~80.9%, Cursor ~48%, and Copilot ~55% in comparable benchmark sets. These differences become more pronounced on hard, multi-step tasks that span multiple files.

HumanEval and MBPP test function-level code generation. Claude Opus 4.6 reaches ~65.4% on Terminal-Bench 2.0; Cursor’s newer Composer variants achieve ~61-62%. These benchmarks better predict inline suggestion quality than autonomous task completion.

Real-world accuracy patterns:

Interpretation guidance: Benchmark scores indicate ceiling performance under controlled conditions. Actual productivity impact depends on task distribution, codebase characteristics, and how well the tool matches your workflow patterns.

Synthesis:

Direct licensing costs:

Team cost scenarios:

Hidden costs matter:

Teams using CLI tools extensively may find Claude Code’s terminal agent a more accessible option despite the learning curve.

Developer resistance challenge: Teams using VS Code or JetBrains resist switching to Cursor’s standalone IDE, even though it’s a VS Code fork with a familiar interface. Exporting configurations, adjusting plugin sets, and changing muscle memory create friction that individual developers often avoid.

Solution:

Code privacy challenge: All three tools process code through external AI models, raising IP protection concerns. Different tools offer different guarantees about data retention and model training.

Solution:

The brutal truth: These tools report adoption metrics—suggestions accepted, completions generated, features used—but none tell you their actual impact on your DORA metrics. License adoption doesn’t equal delivery speed improvement.

Solution:

Specific measurement approaches (pros and cons of relying on DORA alone):

Tool choice depends on team size, existing IDE preferences, and the complexity distribution of your codebase work. GitHub Copilot vs. Cursor vs. Claude Code isn’t a simple “best tool” question; it’s a workflow fit question that requires measurement to answer definitively.

The game changer isn’t picking a winner among these tools; it’s implementing measurement infrastructure that tracks actual engineering impact rather than license deployment counts. Without that measurement, you’re guessing at ROI rather than proving it.

Related topics worth exploring: AI-assisted coding impact and best practices, engineering intelligence platforms for DORA metrics tracking, AI code review automation, and hybrid tool strategies for different tasks across your organization.

Which AI coding tool has the best ROI for engineering teams?

ROI depends on three factors: team size, codebase complexity, and measurement infrastructure. For enterprise teams prioritizing governance and minimal disruption, GitHub Copilot typically delivers the fastest time-to-value. For teams doing heavy refactoring, Cursor’s multi-file capabilities justify the IDE migration cost. For complex architectures or legacy systems, Claude Code’s context window provides unique capabilities. Without measuring actual DORA metric impact, ROI claims remain speculative.

Can you use multiple AI coding tools together effectively?

Yes, hybrid approaches are increasingly common. Many teams use GitHub Copilot for daily inline suggestions, Cursor for complex refactoring sessions, and Claude Code for architectural analysis or debugging multi-step problems. The key is matching each tool to specific task types rather than forcing single-tool standardization, drawing on broader AI coding assistant evaluations and developer productivity tooling strategies.

How do you measure if AI coding tools are actually improving delivery speed?

Focus on DORA metrics: deployment frequency, lead time for changes, change failure rate, and mean time to recovery. Track these metrics before AI tool adoption, then measure changes over 30-90 day periods. Compare PR cycle times for AI-influenced commits versus non-AI commits. Engineering intelligence platforms like Typo provide this measurement across all three tools, and resources such as a downloadable DORA metrics guide can help structure your approach.
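As a rough illustration of the before-and-after comparison described above, the sketch below compares median PR cycle time across two periods. The sample values are invented for illustration, and `median_change` is a hypothetical helper, not part of any platform’s API.

```python
from statistics import median


def median_change(before_hours, after_hours):
    """Return (median_before, median_after, percent_change) for two samples."""
    m_before = median(before_hours)
    m_after = median(after_hours)
    percent_change = (m_after - m_before) / m_before * 100
    return m_before, m_after, percent_change


# Hypothetical PR cycle times in hours, invented for illustration only.
baseline_hours = [30.0, 42.0, 18.0, 55.0, 26.0]       # before AI tool adoption
post_adoption_hours = [20.0, 35.0, 15.0, 40.0, 22.0]  # 30-90 days after adoption

m_before, m_after, pct = median_change(baseline_hours, post_adoption_hours)
print(f"median before: {m_before:.1f} h, after: {m_after:.1f} h, change: {pct:+.1f}%")
```

Medians are used here instead of means because a handful of very slow PRs can dominate an average. The same function can compare AI-influenced and non-AI PRs within one period, provided the two groups can be labeled reliably.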

Which tool is best for teams using legacy codebases?

Claude Code’s 1M token context window makes it uniquely capable of reasoning across very large codebases without losing context. It can analyze entire codebases that would exceed other tools’ limits. For legacy systems requiring understanding of interconnected components across hundreds of files, this context advantage is significant.

What’s the difference between AI code completion and autonomous coding?

Code completion provides inline suggestions as you write code: high frequency, immediate, minimal disruption. Autonomous coding executes entire tasks from plain-language descriptions, making multi-file changes, generating API endpoints, or refactoring components. Completion optimizes flow state for solo developer work; autonomous agents leverage AI for complex tasks that would otherwise require hours of manual effort.

How do enterprise security requirements affect tool choice?

GitHub Copilot Enterprise offers the most comprehensive compliance features: SOC 2 certification, IP indemnification, organizational policy controls, and explicit guarantees about code not being used for model training. Cursor’s enterprise features are less publicly documented. Claude Enterprise offers compliance plans, but terminal-based workflows may require additional security review. Data retention terms and related policies vary by tier, so evaluate enterprise agreements carefully.

PR cycle time measures the duration from pull request creation to merge into the main branch, and it’s the most actionable metric engineering leaders can move quickly. Also called code review cycle time, it is a critical indicator of development velocity and team collaboration efficiency. Elite teams achieve total cycle times under 24 hours, while median performers take 2-5 days. That gap represents days of delayed features, slower feedback loops, and compounding context-switching costs across your entire development pipeline. High cycle times often indicate communication gaps, unclear requirements, or overburdened reviewers, while consistently low cycle times suggest efficient collaboration and well-defined review processes.

This guide covers how to measure PR cycle time components, break down the different phases of the cycle, interpret benchmarks for your team size, diagnose root causes of delays, and implement proven reduction strategies. PR cycle time is an essential part of DORA metrics for engineering performance, so understanding it is crucial for evaluating team performance and efficiency, and for appreciating why PR cycle time is often a better metric than velocity. The target audience is engineering managers, VPs of Engineering, and team leads managing 5-50 developers who want to accelerate their software development process without sacrificing code quality.

The short answer: Reduce PR cycle time through smaller PRs (< 200 lines), automated triage and reviewer assignment, clear code ownership, and AI-powered pre-screening that catches issues before human reviewers engage. Keeping pull requests small and manageable is key: research shows that PRs with over 200 changed lines often deter reviewers, while smaller PRs lead to quicker, more effective code reviews.

By the end of this guide, you will:

PR cycle time, often referred to as code review cycle time, is the total elapsed time from when a pull request is opened until it successfully merges into the main branch. This key metric measures the duration of the code review process and is central to DORA’s Lead Time for Changes—one of the four key metrics that distinguish elite engineering organizations from average performers. Understanding cycle time vs lead time within DORA metrics clarifies how PR cycle time fits into broader delivery performance. Code review cycle time can be broken down into different phases, such as initial development, waiting time, and review, to pinpoint where delays occur and optimize each segment for efficiency.

Understanding cycle time requires breaking it into distinct phases, because the interventions for each are different. Tracking other pull request metrics, like PR Pickup Time and PR size, alongside PR review time and overall cycle time helps teams identify bottlenecks and target improvements more effectively. A team with high PR pickup time needs different solutions than one with slow merge times. Shorter PR cycle times usually indicate smoother workflows, while longer cycle times often signal hidden problems such as unclear ownership or overloaded reviewers.

PR pickup time measures the duration from PR creation until the first reviewer begins reviewing. This is the waiting period where new code sits idle, and it typically dominates overall cycle time.

In an analysis of 117,413 reviewed pull requests, median pickup time was approximately 0.6 hours—but the P90 (slowest 10%) reached 128.9 hours. That’s over five days of waiting before anyone even looks at the code change.

High PR pickup time correlates directly with reviewer availability, team awareness of pending reviews, and lack of automated assignment. When it’s unclear who should review a PR, developers passively wait for someone else to pick it up. Ensuring the team is promptly notified when a PR exists is essential to avoid unnecessary delays and keep the workflow moving.

Managing review requests and making sure PRs are reviewed in a timely manner is crucial for reducing pickup time. Dashboards that track pending review requests and highlight bottlenecks can help teams respond faster and improve overall PR cycle time.

Review time covers the active period from first review through final approval. This includes reading code, providing feedback, waiting for author responses, and iterating through review rounds. Code reviews are an essential process for maintaining code quality and delivery speed, but complex PRs can significantly increase review time due to the additional effort required to understand and assess them.

Key factors affecting review duration include pull request size, code complexity, and reviewer experience with the codebase. Large pull requests take exponentially longer—not just because there’s more code, but because reviewers defer them, requiring more context switching when they finally engage. Common causes of long PR cycle times include large pull requests, unclear ownership, and overloaded reviewers, which can create bottlenecks in the review process.

The tradeoff between review depth and speed is real. Teams must decide how much scrutiny different types of changes warrant. A one-line configuration fix shouldn’t require the same review process as complex changes to core business logic.

Merge time is the interval from final approval to actual merge into the main branch. This phase is often overlooked, but in the same GitHub dataset, P90 merge delay reached 19.6 hours.

Technical factors driving merge time include CI/CD pipeline duration, merge conflicts with other branches, required compliance checks, and branch policies that restrict merge windows. Teams with long-running test suites or manual deployment gates see this phase balloon.
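The three phases described above (pickup, review, merge) can be derived from four timestamps per pull request. The sketch below is a minimal illustration; the `PullRequest` fields are hypothetical names, and real data would come from whatever your Git host exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class PullRequest:
    # Hypothetical field names; map them to your own data source.
    opened_at: datetime
    first_review_at: datetime
    approved_at: datetime
    merged_at: datetime


def phase_durations(pr):
    """Split total PR cycle time into pickup, review, and merge phases."""
    return {
        "pickup": pr.first_review_at - pr.opened_at,
        "review": pr.approved_at - pr.first_review_at,
        "merge": pr.merged_at - pr.approved_at,
        "total": pr.merged_at - pr.opened_at,
    }


# Invented example: opened Monday morning, merged the next day.
example_pr = PullRequest(
    opened_at=datetime(2026, 1, 5, 9, 0),
    first_review_at=datetime(2026, 1, 5, 13, 0),
    approved_at=datetime(2026, 1, 6, 10, 0),
    merged_at=datetime(2026, 1, 6, 11, 30),
)

phases = phase_durations(example_pr)
for name, duration in phases.items():
    print(f"{name}: {duration.total_seconds() / 3600:.1f} h")
```

In this invented case the review phase accounts for most of the elapsed time, which would point toward review-stage interventions and away from assignment or CI changes.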

Understanding each component matters because you can’t fix what you don’t measure. A team might assume review quality is the problem when actually their developers are waiting days for the first comment. The next section establishes benchmarks so you can identify where your team falls.

Industry benchmarks provide context for your team’s performance, but they require interpretation based on your specific situation. Metrics like code review cycle time and other pull request metrics—such as PR Pickup Time, overall cycle time, and PR size—are important benchmarks for assessing team performance and identifying bottlenecks in your workflow, especially when you follow the dos and don'ts of using DORA metrics effectively. A 24-hour cycle time means something different for a 5-person startup versus a 50-person team in regulated fintech. Frequent measurement of key performance indicators (KPIs) helps teams understand which strategies are effective in reducing PR cycle time and optimizing development velocity.

Based on aggregated data from DORA reports, Typo and CodePulse research, code review cycle time benchmarks break down as follows:

For teams of 5-50 engineers specifically: elite performers achieve under 12-24 hours total code review cycle time, with first review happening within four hours during business hours.

The median reviewed PR on public GitHub takes approximately 3 hours total—but P90 reaches 149 hours. That spread indicates most PRs move quickly, but a significant tail of delayed reviews drags down team velocity.
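To see why a median and a P90 can diverge so sharply, the sketch below computes both for a small invented sample using the nearest-rank percentile method. Other percentile definitions interpolate between values and will give slightly different results.

```python
from statistics import median


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile for an integer pct in 1..100."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


# Hypothetical PR cycle times in hours: mostly fast, with a slow tail.
cycle_hours = [1, 2, 2, 3, 3, 3, 4, 5, 40, 150]

median_hours = median(cycle_hours)
p90_hours = percentile_nearest_rank(cycle_hours, 90)
print(f"median: {median_hours} h, P90: {p90_hours} h")
```

Reporting both numbers keeps the slow tail visible. A team can have a healthy median while one PR in ten still waits more than a day.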

Effective measurement requires tracking each phase separately rather than just total duration. Breaking down the process into different phases enables more targeted improvements:

Tracking other pull request metrics such as PR Pickup Time and PR size alongside these phases provides additional insight into where delays or inefficiencies occur in the pull request process.

Consider business hours versus calendar time. An 18-hour cycle time that spans overnight isn’t the same as 18 hours during working hours. Some tools normalize for this; others require manual interpretation.
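One way to normalize for working time is to count only the overlap between the PR’s lifetime and each working day. The sketch below assumes a Monday to Friday, 09:00 to 17:00 window, timezone-naive timestamps, and no holidays; all of these are simplifying assumptions, and `business_hours_between` is a hypothetical helper.

```python
from datetime import datetime, time, timedelta

WORK_START = time(9, 0)   # assumed start of the working day
WORK_END = time(17, 0)    # assumed end of the working day


def business_hours_between(start, end):
    """Hours between start and end that fall inside Mon-Fri working windows."""
    total = timedelta()
    day = start.date()
    while day <= end.date():
        if day.weekday() < 5:  # 0-4 are Monday through Friday
            window_start = datetime.combine(day, WORK_START)
            window_end = datetime.combine(day, WORK_END)
            overlap_start = max(start, window_start)
            overlap_end = min(end, window_end)
            if overlap_end > overlap_start:
                total += overlap_end - overlap_start
        day += timedelta(days=1)
    return total.total_seconds() / 3600


# Opened Monday 16:00, merged Tuesday 10:00: 18 calendar hours.
opened = datetime(2026, 1, 5, 16, 0)
merged = datetime(2026, 1, 6, 10, 0)

calendar_hours = (merged - opened).total_seconds() / 3600
working_hours = business_hours_between(opened, merged)
print(f"calendar: {calendar_hours:.1f} h, business: {working_hours:.1f} h")
```

Distributed teams would need per-reviewer time zones and calendars, which this sketch deliberately leaves out.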

Typo surfaces real-time PR analytics that break down these components automatically, helping engineering leaders identify bottlenecks without manual data collection. The platform tracks cycle time trends across teams and repos, flagging when metrics drift outside acceptable ranges and making it easier to track and improve DORA metrics across your SDLC.

Benchmarks shift based on team composition and business context:

Small teams (5-10 engineers): Expect shorter cycle times due to higher code familiarity and simpler coordination. Target <4 hours for elite performance.

Medium teams (10-50 engineers): Coordination overhead increases. Target <24 hours for strong performance. Cross-team reviews and code ownership complexity require explicit processes.

Regulated industries: Compliance requirements, security reviews, and audit trails legitimately extend cycle time. Focus on reducing variance and eliminating unnecessary delays rather than hitting startup-speed benchmarks.

High-risk code changes: Critical paths warrant thorough review despite longer cycle times. The goal isn’t uniform speed—it’s appropriate speed for each type of change.

With benchmarks established, the next section covers specific interventions proven to reduce cycle time.

These strategies come from teams that have achieved measurable improvements—not theoretical best practices. Setting WIP limits and actively managing review PRs are proven methods to reduce PR cycle time, as they help prevent bottlenecks and maintain a steady workflow. Each intervention addresses specific phases of the PR cycle and includes implementation guidance. Effective PR teams can save up to 40% of their time by streamlining processes and eliminating bottlenecks through structured workflows.

Pull request size is the single strongest predictor of cycle time. Typo data shows small PRs get picked up 20× faster than large ones. The relationship is exponential, not linear. Complex PRs—those with many files changed or large code diffs—tend to slow down reviews, increase the risk of bugs, and create bottlenecks for both authors and reviewers.

Implementation steps:

For example, a team working on a major refactor initially submitted a single complex PR with over 1,000 lines changed. Reviewers hesitated to pick it up, and the PR sat idle for days. After splitting the work into five smaller PRs, each focused on a specific module, reviews were completed within hours, and feedback was more actionable.

Smaller PRs benefit everyone: authors get faster feedback, reviewers maintain focus without context switching overload, and the team catches issues earlier in the development process. Keeping pull requests small and manageable significantly enhances the likelihood of timely reviews, as large or complex PRs often deter reviewers and delay progress.
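A simple way to monitor PR size is to track the share of pull requests that stay under the size guideline and to list the ones that exceed it. The sketch below uses the 200-line guideline mentioned in this guide; the PR identifiers and sizes are invented, and the helper names are hypothetical.

```python
SIZE_LIMIT = 200  # lines changed, per the guideline discussed in this guide


def share_under_limit(sizes, limit=SIZE_LIMIT):
    """Fraction of PRs whose changed-line count is below the limit."""
    if not sizes:
        return 0.0
    return sum(1 for size in sizes if size < limit) / len(sizes)


def oversized(prs, limit=SIZE_LIMIT):
    """Return identifiers of PRs at or above the limit, largest first."""
    flagged = [(size, pr_id) for pr_id, size in prs.items() if size >= limit]
    return [pr_id for size, pr_id in sorted(flagged, reverse=True)]


# Hypothetical PR identifiers mapped to lines changed.
recent_prs = {
    "pr-101": 45, "pr-102": 120, "pr-103": 180, "pr-104": 199, "pr-105": 200,
    "pr-106": 350, "pr-107": 1000, "pr-108": 80, "pr-109": 60, "pr-110": 150,
}

small_share = share_under_limit(list(recent_prs.values()))
too_large = oversized(recent_prs)
print(f"{small_share:.0%} under {SIZE_LIMIT} lines; oversized: {too_large}")
```

A check like this is best run as a report or a soft warning. Some changes, such as generated files or migrations, are legitimately large.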

When it’s unclear who should review a PR, it sits in limbo. Automated assignment eliminates this ambiguity and ensures that every team member is promptly notified when a PR exists, reducing the risk of overlooked or stalled pull requests. Managing review requests effectively—by tracking pickup times and monitoring pending review requests—helps teams identify bottlenecks and maintain steady progress.

Implementation steps:

Clear expectations around response times eliminate ambiguity and ensure reviews and merges are completed in a timely manner, which is essential for maintaining workflow efficiency and reducing waiting.

Supporting practices:

Async norms work because they remove negotiation overhead. Reviewers know what’s expected; authors know when to escalate, helping the team consistently complete reviews and merges in a timely manner.

AI-powered pre-screening represents the largest recent advancement in reducing cycle time. These tools act as a first reviewer, catching issues before human reviewers engage and transforming how AI is used in the code review process.

Atlassian’s internal deployment of their AI code review agent reduced PR cycle time by approximately 45%. Their median time from open to merge had crept above 3 days, with pickup waits averaging 18 hours. After implementing AI pre-screening, the wait for first feedback dropped to effectively zero.

How AI code review helps:

Typo customers have seen substantial improvements: StackGen achieved 30% reduction in PR review time, and JemHR improved PR cycle time by 50%. These gains come from reducing review iterations—AI code reviews catch what would otherwise require human feedback rounds.

The balance between automation and human judgment matters. AI handles mechanical checks; humans focus on architecture, logic, and maintainability. This division makes both more effective.

Even teams committed to improvement hit obstacles. These are the most frequent bottlenecks and proven solutions. Setting WIP limits helps manage work-in-progress and prevent bottlenecks, while tracking other pull request metrics—such as PR Pickup Time, cycle time, and PR size—enables teams to monitor and optimize the entire pull request process. Additionally, mapping workflows visually, creating standard operating procedures (SOPs), and implementing a RACI matrix are effective strategies for improving PR processes and reducing cycle time.

Problem: Senior engineers become bottlenecks, reviewing most PRs while their queues grow.

Solution: Implement load balancing across team members. Cross-train developers on different code areas so multiple people can approve in each subsystem. Track review distribution metrics and adjust when imbalance appears.
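Load balancing can be as simple as routing each new PR to the eligible reviewer with the fewest open reviews. The sketch below is one possible rule, not a description of how any particular tool assigns reviewers; the names and queue sizes are invented.

```python
def pick_reviewer(eligible, open_reviews, author):
    """Pick the eligible reviewer with the fewest open reviews, excluding the author.

    Ties are broken alphabetically so the result is deterministic.
    """
    candidates = [name for name in eligible if name != author]
    if not candidates:
        raise ValueError("no eligible reviewer other than the author")
    return min(candidates, key=lambda name: (open_reviews.get(name, 0), name))


# Hypothetical subsystem owners and their current review queues.
owners = ["ana", "bo", "chen"]
queue = {"ana": 6, "bo": 2, "chen": 2}

chosen = pick_reviewer(owners, queue, author="bo")
print(f"assign review to: {chosen}")
```

A production rule would likely also account for time off and time zones, and for how large each pending review is.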

Problem: PRs sit waiting because no one knows who should review them.

Solution: CODEOWNERS files combined with automated assignment rules. Define clear escalation paths for when owners are unavailable. Every directory should have at least two qualified reviewers.

Problem: Constant PR notifications interrupt deep work, leading developers to ignore them entirely.

Solution: Batch review sessions instead of interrupt-driven reviews. Configure intelligent notification filtering that surfaces urgent items while batching routine reviews. Some teams find dedicated “review o’clock” times effective.

Problem: Some changes genuinely can’t be decomposed easily, especially migrations or refactoring.

Solution: Establish different review processes for known-large changes. Use incremental migration strategies where possible. When large PRs are unavoidable, schedule dedicated review time with appropriate reviewers rather than expecting async turnaround.

Reducing PR cycle time requires a systematic approach across three dimensions: controlling PR size, automating triage and initial review, and establishing clear team processes. The teams seeing 30-50% improvements aren’t doing one thing differently—they’re applying multiple interventions that compound.

Immediate next steps:

Related areas to explore: Overall DORA metrics optimization connects PR cycle time to broader delivery performance, including CI/CD optimization using DORA metrics. Developer experience measurement helps identify whether cycle time improvements translate to actual productivity gains. Understanding how AI coding tools impact your metrics ensures you’re measuring what matters as development practices evolve.

See PR Analytics in Typo to track cycle time components and identify bottlenecks in real time. The platform surfaces where your team loses time across the entire code review process, enabling targeted interventions rather than guesswork.

What’s the difference between PR cycle time and lead time for changes?

PR cycle time measures from pull request creation to merge. DORA’s Lead Time for Changes spans from first commit to running in production—a broader measure that includes time before PR creation and deployment time after merge. PR cycle time is a subset of lead time and typically the most actionable component for engineering teams to improve when you are mastering the art of DORA metrics.

How do I convince my team to keep PRs smaller without sacrificing quality?

Frame it as reducing cognitive load, not cutting corners. Smaller PRs get faster, more thorough reviews because reviewers can actually focus. Share data: PRs under 200 lines get reviewed 20× faster. Start with guidelines rather than hard limits, and celebrate examples of good decomposition. Feature flags enable shipping incomplete features safely, removing the pressure to batch everything into large PRs.

Should we prioritize speed over thorough code review?

No—but the framing is misleading. Smaller PRs enable both speed and thoroughness. A reviewer spending 20 focused minutes on a 100-line PR catches more issues than spending 90 distracted minutes on a 500-line PR. Optimize for review quality per line of code, not absolute time spent. Reserve intensive review for high-risk changes; routine changes can move faster.

How does AI code review impact overall cycle time?

AI code review primarily reduces pickup time (providing instant first feedback) and review iterations (catching issues authors would otherwise need to fix after human review). Atlassian saw 45% cycle time reduction; Exceeds AI data shows PRs with AI assistance close in 2.1 days versus 4.2 days without. The tradeoff: some research indicates AI-assisted PRs may have higher defect density, so human review remains essential for complex changes.

What’s a realistic target for teams just starting to optimize PR cycle time?

Start with reducing time to first review by 25% and ensuring 80%+ of PRs stay under 200 lines. For a team currently at 3-5 day cycle times, target reaching <48 hours within a quarter. Elite performance (<12 hours) typically requires multiple optimization cycles. Focus on consistency before speed—reducing variance in your slowest PRs often matters more than improving your already-fast ones.

In 2026, organizations across manufacturing, IT, and product development face mounting pressure to deliver more value with fewer resources. Throughput metrics have become the universal language for quantifying exactly how much value—whether units, tasks, transactions, or data—a system delivers per unit of time. Understanding throughput is no longer optional; it’s the foundation of operational efficiency and competitive advantage.

Throughput metrics are typically tracked over a certain period, such as daily or weekly intervals, to analyze and optimize efficiency.

This guide is intended for operations managers, software development leads, IT professionals, and anyone responsible for optimizing system performance or delivery processes. Tracking key metrics is essential for monitoring system performance and identifying opportunities to improve throughput.

This article covers throughput metrics across three critical domains: manufacturing operations, Agile and Kanban workflows in software development processes, and system performance including network and load testing. You’ll learn core formulas with practical examples, discover how to calculate throughput in different contexts, and understand how to interpret throughput data alongside related metrics like cycle time, lead time, and bandwidth.

Throughput refers to the number of completed units of output delivered per defined period.

Throughput metrics measure the rate at which a system processes, completes, or delivers work within a specific timeframe. In IT, that is the amount of data or transactions a system processes within a defined time frame under specific conditions; in manufacturing, it is the amount of product a company can produce and deliver within a set time period. In every case, throughput measures how quickly and efficiently an organization can deliver products, services, or completed work to meet customer demands.

Whether you’re measuring products per hour, stories per sprint, or requests per second, the fundamental concept remains consistent: throughput quantifies your system’s actual delivery rate.

The basic formula is straightforward:

Throughput = Number of Completed Units / Time Period

The critical distinction here is that “completed units” must represent actual value delivered (sold products, deployed features, or successfully processed requests) rather than merely work started. When completions are not counted directly, Little’s Law gives the same rate as work in process divided by average flow time: a chair manufacturer with 100 chairs in their production process and an average flow time of 10 days has a throughput of 100 / 10 = 10 chairs per day, regardless of how many units are still being assembled.

Because throughput is a rate (not a raw count), it’s sensitive to both volume and time. This characteristic makes measuring throughput central for capacity planning, allowing teams to forecast how many units or tasks they can realistically deliver within a given period.
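The basic formula and the chair example can be checked with a few lines of code. The sketch below shows both routes to a throughput figure: counting completions directly, and deriving the rate from work in process and flow time via Little’s Law. The function names are illustrative, and the 70-items-in-5-days figures are invented.

```python
def throughput(completed_units, period):
    """Completed units per unit of time."""
    return completed_units / period


def throughput_from_wip(work_in_process, avg_flow_time):
    """Little's Law rearranged: throughput = WIP / average flow time."""
    return work_in_process / avg_flow_time


# Direct count (invented figures): 70 items finished over 5 working days.
items_per_day = throughput(70, 5)

# The chair example: 100 chairs in process, 10-day average flow time.
chairs_per_day = throughput_from_wip(100, 10)

print(f"{items_per_day:.1f} items/day, {chairs_per_day:.1f} chairs/day")
```

Little’s Law describes long-run averages in a stable system, so the second route is only an estimate when work in process is growing or shrinking quickly.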

While the mathematical definition of throughput remains consistent, its practical interpretation varies across industries:

These variations share a common thread: throughput always answers “how many units of value does this process deliver per unit of time?”

Throughput rarely tells the complete story on its own. To gain valuable insights into team performance and organizational performance, you need to analyze throughput alongside other key flow metrics that capture different dimensions of system behavior.

Throughput metrics gained strategic prominence through the Theory of Constraints (TOC), pioneered by Eliyahu Goldratt in his 1984 novel “The Goal.” TOC positions throughput as the primary measure of system success, with all performance ultimately limited by a single constraint or bottleneck.

Consider a factory capable of assembling 500 units daily, yet shipping only 350 units due to final inspection capacity limits. This throughput analysis immediately highlights where management should focus improvement efforts. Organizations use throughput per shift, per day, or per week as a governance metric in manufacturing, logistics, and warehouse operations to identify areas requiring intervention.
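The factory example reflects a general rule for sequential processes: the system can deliver no more than its slowest stage. The sketch below applies that rule to the two stages named above; `find_bottleneck` is a hypothetical helper.

```python
def find_bottleneck(daily_capacity):
    """Return (stage, capacity) for the stage with the lowest daily capacity."""
    stage = min(daily_capacity, key=daily_capacity.get)
    return stage, daily_capacity[stage]


# Stage capacities in units per day, taken from the example above.
daily_capacity = {"assembly": 500, "final_inspection": 350}

stage, system_throughput = find_bottleneck(daily_capacity)
idle_assembly_capacity = daily_capacity["assembly"] - system_throughput
print(f"bottleneck: {stage}, system throughput: {system_throughput} units/day")
print(f"assembly capacity not converted to shipments: {idle_assembly_capacity} units/day")
```

Adding capacity anywhere other than the bottleneck stage leaves the shipped total unchanged, which is the core argument of the Theory of Constraints.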

In software development and knowledge work, throughput tracks completed work items—user stories, tasks, bugs, or features—over a sprint or week. This performance metric provides a count-based view of delivery capacity that supports forecasting and process stability assessment.

Consider two teams with a similar velocity of 40 story points per sprint. Team A completes 8 large items while Team B completes 16 smaller items. Team B’s higher throughput typically indicates better predictability and more frequent customer feedback, which is why throughput matters for agile project management. Differences in how teams estimate work, such as whether they rely more on story points or hours, also influence how throughput and velocity trends are interpreted.

Kanban tools commonly visualize throughput using specialized charts that reveal patterns invisible in raw numbers:

A typical pattern might show a team whose throughput centers around 6-8 items/day under normal conditions but occasionally spikes to 15 items immediately following big releases when accumulated items flow through to completion. Recognizing these patterns enables better sprint planning and resource allocation.

In Scrum, throughput measures completed Product Backlog Items per sprint, regardless of story point estimates. This simplicity makes it powerful for tracking a team’s throughput over time, especially when complemented with DORA metrics to improve Scrum team performance.

Example progression:

Best practices for Scrum throughput:

In performance testing, throughput measures transactions processed per second or minute under specific load conditions. This metric is central to validating that systems can handle expected—and unexpected—traffic volumes.

Test reports typically present time-series throughput graphs, helping teams identify at what user load throughput plateaus and correlate performance degradation with specific system components.

Network throughput represents the actual volume of data successfully delivered over a link per second, while bandwidth defines the maximum theoretical capacity. Understanding this distinction is crucial for realistic capacity planning.
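The gap between throughput and bandwidth can be expressed as link utilization. The sketch below converts a measured transfer into megabits per second and compares it with the nominal capacity; the transfer size, duration, and 1,000 Mbps link are invented figures.

```python
def throughput_mbps(bytes_delivered, seconds):
    """Measured throughput in megabits per second (1 Mb = 1,000,000 bits)."""
    return bytes_delivered * 8 / seconds / 1_000_000


def utilization(measured_mbps, bandwidth_mbps):
    """Fraction of nominal bandwidth actually achieved."""
    return measured_mbps / bandwidth_mbps


# Hypothetical measurement: 750 MB delivered in 10 seconds on a 1,000 Mbps link.
measured = throughput_mbps(750_000_000, 10)
link_utilization = utilization(measured, 1000)
print(f"throughput: {measured:.0f} Mbps, utilization: {link_utilization:.0%}")
```

Protocol overhead, retransmissions, and congestion all reduce measured throughput, so capacity plans based on nominal bandwidth alone tend to be optimistic.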

Engineers read throughput graphs during incident analysis to pinpoint whether network capacity, application logic, or backend systems are causing degradation.

This section provides ready-to-use formulas for typical contexts along with guidance on interpretation and common pitfalls.

Operations/Manufacturing:

Agile/Kanban:

Performance Testing:

Financial/Healthcare:

Interpretation guidance:

Throughput measurement tools play a key role in helping organizations achieve operational efficiency by providing the data and insights needed to calculate throughput, analyze performance, and identify bottlenecks across workflows. By leveraging these tools, teams can visualize throughput, track progress on tasks, and pinpoint areas where efficiency can be improved.

Time tracking software is a foundational tool for measuring how long tasks and projects take to complete. By capturing detailed throughput data, these tools enable teams to analyze throughput trends, identify areas where work slows down, and make informed decisions to optimize productivity.

Project management platforms such as Asana, Trello, and Jira are widely used to manage workflows, monitor work in progress, and track completed items over a set timeframe. These tools not only help teams calculate throughput but also provide valuable insights into team performance, allowing managers to identify bottlenecks and allocate resources more effectively. By visualizing throughput and work completed, organizations can quickly spot inefficiencies and implement targeted improvements.

Analytics software, including solutions like Google Analytics and Mixpanel, extends throughput measurement to digital environments. These tools help organizations analyze throughput in terms of website traffic, user actions, and conversion rates, offering a data-driven approach to optimizing digital processes and increasing throughput.

In supply chains and logistics, specialized supply chain management software is essential for tracking inventory, monitoring the flow of raw materials, and managing the production process. These tools help organizations identify areas where wait time or processing time limits throughput, enabling more efficient sourcing of raw materials and smoother delivery of finished goods.

By integrating these throughput measurement tools into their operations, organizations gain the ability to continuously monitor, analyze, and improve throughput. This leads to greater efficiency, higher productivity, and a more agile response to changing business demands.

Consistently tracking throughput gives organizations a quantitative basis for improvement decisions rather than relying on intuition or anecdotes. The benefits span operational, financial, and customer-facing dimensions.

These benefits apply across functions: operations teams use throughput for scheduling, engineering teams for sprint planning, and finance teams for margin analysis.

Improving throughput isn’t simply about working faster—it requires systematically removing constraints and reducing waste. The flow rate through any system depends on its weakest link, so indiscriminate effort often yields minimal results.

Any throughput increase must be balanced with quality and risk management. Track defect rates, error percentages, and customer complaints alongside throughput to ensure speed doesn’t compromise value. Maintaining high throughput means nothing if quality degrades.
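One simple way to pair throughput with quality is to report the defect rate next to the raw count and to discount defective items. The sketch below is one possible formulation, not a standard metric; `quality_adjusted_throughput` and the sample figures are hypothetical.

```python
def quality_adjusted_throughput(completed, defective):
    """Return (defect_free_items, defect_rate) for one period."""
    if completed < 0 or not 0 <= defective <= completed:
        raise ValueError("defective must be between 0 and completed")
    defect_rate = defective / completed if completed else 0.0
    return completed - defective, defect_rate


# Hypothetical week: 40 items completed, 6 later found defective.
good_items, rate = quality_adjusted_throughput(completed=40, defective=6)
print(f"Raw throughput: 40, defect-free: {good_items}, defect rate: {rate:.0%}")
```

If raw throughput rises while the defect-free count stays flat, the extra speed is being paid for in rework.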

Organizations seeking to maximize throughput and maintain high operational efficiency often adopt proven methodologies and frameworks that focus on continuous improvement, reducing bottlenecks, and increasing productivity. These organizational approaches are designed to optimize the flow of work, streamline processes, and ensure that resources are used as efficiently as possible.

Lean manufacturing is a widely adopted approach that emphasizes the elimination of waste, reduction of variability, and improvement of process flow. By focusing on value-added activities and systematically removing inefficiencies, Lean helps organizations increase throughput and deliver more value with fewer resources.

Agile project management is another powerful strategy, particularly in software development and knowledge work. By breaking down large projects into smaller, manageable tasks and prioritizing work based on customer value, Agile teams can improve throughput, adapt quickly to change, and foster a culture of continuous improvement. Regular retrospectives and iterative planning help teams identify bottlenecks and implement targeted improvements to their workflows.

Total Quality Management (TQM) takes a holistic approach to improving throughput by engaging employees at all levels in the pursuit of quality and efficiency. TQM emphasizes continuous improvement, data-driven decision-making, and a strong focus on customer satisfaction. By embedding quality into every stage of the production process, organizations can reduce rework, minimize delays, and increase overall throughput.

Just-in-time (JIT) production is a strategy that aligns production schedules closely with customer demand, minimizing inventory and reducing wait times. By producing and delivering products only as needed, organizations can optimize throughput, reduce excess work in progress, and respond more flexibly to market changes.

By implementing these organizational approaches, companies can systematically improve throughput, reduce bottlenecks, and drive ongoing improvements in efficiency and productivity. These strategies not only enhance team performance and project management outcomes but also position organizations for long-term success in competitive markets.

Throughput can mislead when measured incorrectly or incentivized poorly. Awareness of common pitfalls helps teams avoid optimizing for the wrong outcomes.

In one documented case, throughput-tied bonuses led a team to fragment large projects into dozens of tiny tickets, technically increasing throughput while delaying actual project completion by weeks. The lesson: throughput incentives must align with customer value, not just item counts.
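The fragmentation effect is easy to reproduce with toy data. The sketch below compares a plain item count with an effort-weighted total; the ticket names and effort units are hypothetical, and effort weighting is only one possible safeguard, not something the case above prescribes.

```python
def count_throughput(tickets):
    """Throughput as a plain count of completed tickets."""
    return len(tickets)


def weighted_throughput(tickets):
    """Throughput as the sum of effort units across completed tickets."""
    return sum(effort for _, effort in tickets)


# The same work, delivered as one ticket or split into thirteen.
one_ticket = [("checkout redesign", 13)]
fragmented = [(f"checkout redesign part {i}", 1) for i in range(1, 14)]

print(count_throughput(one_ticket), count_throughput(fragmented))
print(weighted_throughput(one_ticket), weighted_throughput(fragmented))
```

The count jumps from 1 to 13 while the weighted total stays at 13, which is why item counts alone are a weak basis for incentives.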

Throughput metrics, when clearly defined and consistently measured, provide a powerful lens on system performance across manufacturing, Agile delivery, and IT operations. From how many units a factory ships daily to transactions processed by financial systems per second, throughput answers the fundamental question of delivery capacity.

The most effective use of throughput combines:

Industry leaders in 2026 leverage throughput metrics not just for reporting, but for probabilistic forecasting, constraint identification, and continuous improvement. Real-time dashboards in manufacturing execution systems and Kanban tools provide immediate visibility, while AI-driven simulations enable more sophisticated planning than simple averages allow.
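Probabilistic forecasting of this kind is often done with a Monte Carlo simulation that resamples historical throughput. The following is a minimal sketch assuming weekly throughput samples and a fixed backlog size; the function names, sample history, and percentile choices are illustrative assumptions rather than any particular tool's method.

```python
import random


def simulate_weeks(history, backlog, trials=10_000, seed=0):
    """Simulate how many weeks it takes to clear `backlog` items.

    Each simulated week draws a throughput value at random from
    `history`. Returns the sorted list of week counts, one per trial.
    """
    if not history or max(history) <= 0:
        raise ValueError("history needs at least one positive sample")
    rng = random.Random(seed)  # seeded so runs are reproducible
    outcomes = []
    for _ in range(trials):
        remaining, weeks = backlog, 0
        while remaining > 0:
            remaining -= rng.choice(history)
            weeks += 1
        outcomes.append(weeks)
    return sorted(outcomes)


def percentile(sorted_values, p):
    """Return the value at the p-th percentile of a sorted list."""
    index = min(len(sorted_values) - 1, int(p / 100 * len(sorted_values)))
    return sorted_values[index]


# Hypothetical history: items completed in each of the last five weeks.
outcomes = simulate_weeks(history=[3, 5, 4, 6, 2], backlog=40)
print("50% confidence:", percentile(outcomes, 50), "weeks")
print("85% confidence:", percentile(outcomes, 85), "weeks")
```

Reporting a range at stated confidence levels conveys the uncertainty that a single average-based estimate hides.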

Start by mapping your current process and identifying the single biggest constraint limiting your throughput today. Implement basic throughput tracking with consistent definitions and measurement periods. As your data matures, incorporate other metrics and move toward more advanced analyses. Building resilient, scalable, and customer-centric operations requires exactly this kind of quantitative foundation—and throughput metrics provide the starting point.

The full development cycle, commonly referred to as the Software Development Life Cycle (SDLC), is a structured, iterative methodology for planning, building, testing, and deploying high-quality software efficiently and cost-effectively. The SDLC consists of six core phases: planning, design, implementation, testing, deployment, and maintenance. Each phase serves as a checkpoint that contributes to quality and project success.

Beyond the phases, teams can choose among several SDLC models. Common SDLC models include the Waterfall model (a linear, sequential approach best for small projects), the Agile model (an iterative, flexible methodology emphasizing collaboration and customer feedback), the V-shaped model (which focuses on validation and verification through testing at each stage), the Spiral model (which combines iterative development with risk assessment), and the RAD (Rapid Application Development) model (which emphasizes quick prototyping and user feedback). The right model depends on the software project’s requirements, team structure, and complexity.

The full development cycle refers to managing a software product’s entire life cycle through a structured SDLC process that maintains team continuity and a unified project vision. This approach is central to custom software development, where the same project team is engaged throughout the software development life cycle. A full cycle developer is involved in every stage of the process, which supports a seamless workflow, clear communication, and end-to-end responsibility for project success. Unlike segmented or sprint-based engagements, where work is handed off between teams, full-cycle software development avoids interruptions during the development cycle, which can lead to faster time-to-market, better budget management, and lower cost.

Full-cycle software development is also ideal for MVP development, as it allows for planning all steps in advance and gradual implementation. This is particularly beneficial for complex projects, as it allows for comprehensive planning, risk management, and proactive problem-solving. The consistency of engaging the same team throughout the entire process enhances communication, collaboration, and the quality of the final product. A unified dev team boosts developer productivity and operational efficiency, empowering the team to deliver better results and reduce burnout.

Why does this matter? With fast-changing market demands and high customer expectations, managing the entire life cycle allows faster response to change, better alignment with business objectives, and improved quality assurance. Effective project management in a software project includes monitoring and controlling, risk management, and keeping cost and time in check through detailed planning, all of which contribute to effective software delivery across the SDLC. Improved visibility keeps stakeholders informed and streamlines project tracking.

Organizations using fragmented approaches often accumulate significant technical debt because early decisions in system architecture, security, and user experience suffer when later teams lack context from previous development stages. Effective communication among team members and full cycle developers further enhances workflow efficiency and project success, particularly when supported by well-chosen KPIs for software development team success that align everyone on shared outcomes.

Risk management in SDLC detects issues early, mitigating potential security or operational risks, especially when teams follow well-defined software development life cycle phases with clear deliverables and review points. Additionally, SDLC addresses security by integrating security measures throughout the entire software development life cycle, not just in the testing phase. Approaches such as DevSecOps incorporate security early in the process and make it a shared responsibility, ensuring a proactive stance on security management during SDLC from initial design to deployment.

The development cycle, often referred to as the software development life cycle (SDLC), is a structured process that guides development teams through the creation of high-quality software. By following a systematic approach, the SDLC ensures that every stage of software development, from initial planning to final deployment, is managed to meet customer expectations and business goals. This structure brings order and efficiency to software development, reducing risks and improving outcomes. Each phase plays a vital role in shaping a final product that is robust, reliable, and aligned with user needs.

The software development life cycle (SDLC) is the backbone of a successful software development process, providing a systematic framework that guides teams from concept to completion. By breaking the process into distinct, interconnected phases (planning, design, implementation, testing, deployment, and maintenance), the SDLC ensures that every aspect of the project is managed and aligned with customer expectations. This structured approach helps development teams produce high-quality software, anticipate challenges, allocate resources efficiently, and maintain a clear focus on project goals throughout the life cycle. By adhering to the SDLC, organizations can deliver software that is reliable, scalable, and tailored to the evolving needs of users.

A streamlined workflow is essential to an effective software development life cycle. In full cycle software development, the development team benefits from a clearly defined process in which each stage, from planning through deployment, is mapped out and responsibilities are transparent. This clarity allows the team to collaborate efficiently, minimizing bottlenecks and ensuring that every member knows their role in the development cycle. A structured workflow makes the development process more predictable and manageable, which is essential for delivering high-quality software that aligns with customer expectations. Project management plays a pivotal role here: Agile and Lean development practices help teams adapt quickly to change and stay focused on their goals, while engineering data management and workflow automation support continuous improvement. Ultimately, a streamlined workflow supports the entire life cycle, enabling the development team to deliver consistent results and maintain momentum.

The planning and requirements gathering phase is the cornerstone of a successful software development life cycle. During this stage, the development team collaborates closely with stakeholders, including customers, end users, and project managers, to collect and document all necessary requirements for the software project. This process produces a comprehensive software requirements specification (SRS) document, which outlines the project scope, objectives, and key deliverables. The SRS serves as a roadmap for the entire development process, ensuring that everyone involved has a clear understanding of what needs to be achieved. In addition to defining requirements, the planning phase involves careful risk management, accurate cost estimates, and strategic resource allocation, all of which directly influence developer productivity throughout the project. These activities help the team assess project feasibility and set realistic timelines, laying a solid foundation for the rest of the software development life cycle, including the code review practices that will support code quality later on. By investing time and effort in thorough planning, development teams can minimize uncertainties and set the stage for smooth project execution.

The design phase is a pivotal part of the software development life cycle, where the vision for the software begins to take concrete shape. During this stage, software engineers use the insights gathered during the planning phase to craft a detailed blueprint for the software product. This involves selecting the most appropriate technologies and development tools and considering whether existing modules can be integrated to streamline the development process. The design phase also addresses how the new solution will fit within the current IT infrastructure, ensuring compatibility and scalability. The result is a comprehensive design document that outlines the software’s architecture, user interfaces, and system components, serving as a roadmap for the implementation phase. By investing in a thorough design phase, development teams lay a strong foundation for the entire development process, reducing risks and setting the stage for a successful project.