This article is for software developers, project managers, and technical leads who want to understand the SDLC coding phase to ensure efficient, high-quality software delivery. The SDLC coding phase is the stage in the Software Development Life Cycle (SDLC) where your project transitions from design documents to actual, working software. If you’re searching for information about the SDLC coding phase, this guide confirms the topic and provides a comprehensive overview of what happens during this critical stage, who is involved, and which best practices and tools are essential for success.

The SDLC coding phase is when developers convert software design into code, following best practices such as adhering to coding standards, using version control, conducting code reviews, writing clean and maintainable code, ensuring modularity for scalability, performing unit testing, documenting code, and leveraging CI/CD for automation.

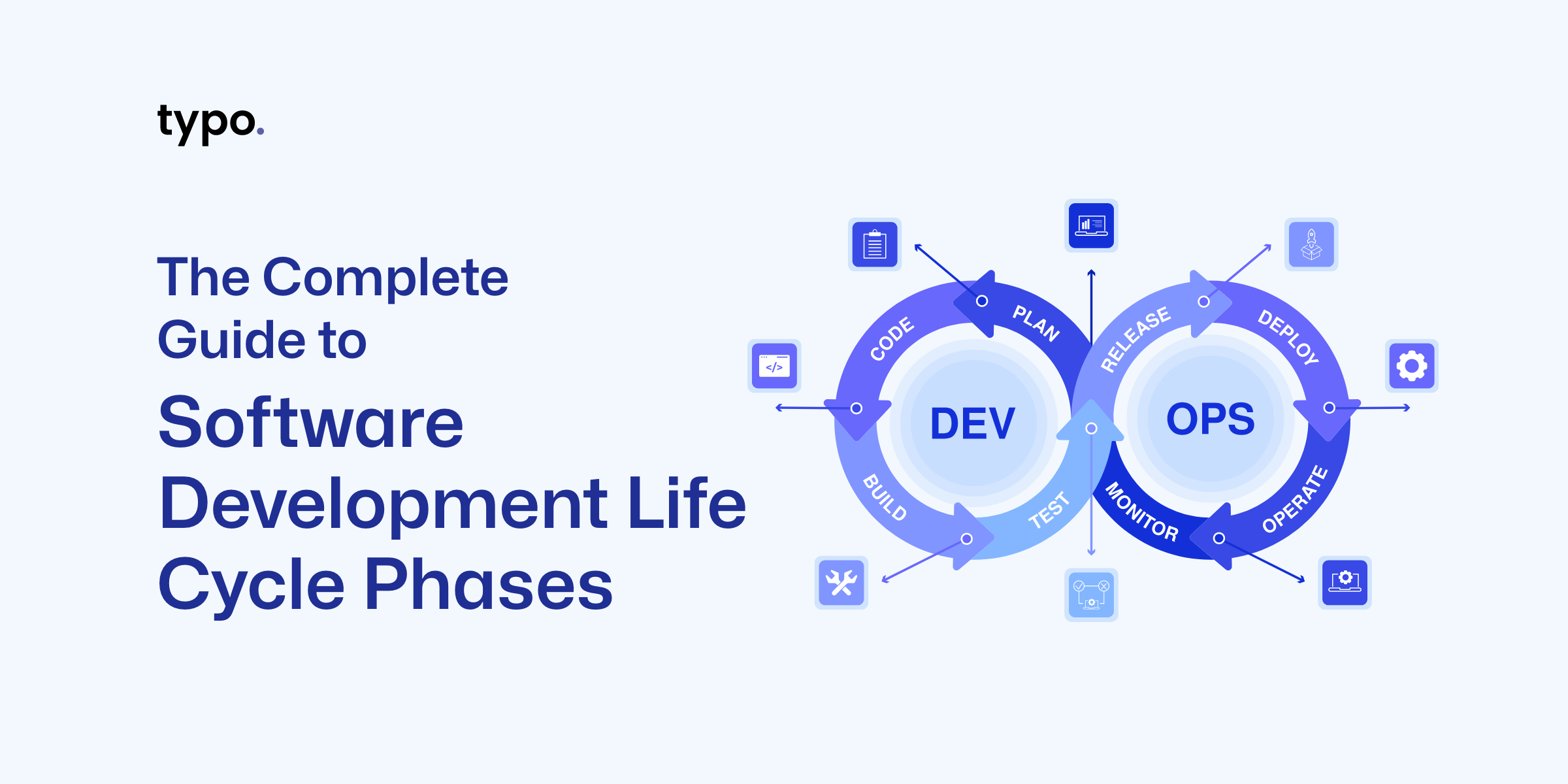

The Software Development Life Cycle (SDLC) consists of seven essential phases: Planning, Requirements Analysis, Design, Coding, Testing, Deployment, and Maintenance. The SDLC is the backbone of modern software development, providing a structured approach for development teams to transform ideas into high quality software products. The SDLC outlines a series of well-defined phases—planning, requirements gathering, design, coding, testing, deployment, and maintenance—that guide the software development process from start to finish. By following the development life cycle SDLC, organizations can manage complexity, align with business objectives, and ensure that the final product meets user expectations.

A disciplined SDLC helps development teams minimize risks, control costs, and deliver reliable software that stands up to real-world demands. Whether you’re building a new SaaS platform or enhancing an existing system, a robust software development life cycle ensures that every stage of the development process is accounted for, resulting in software that is both functional and maintainable throughout its software development life.

With a clear understanding of the SDLC’s structure, let’s explore the different models used to implement these phases.

Selecting the right SDLC model is a critical decision that shapes the entire software development process. There are several popular SDLC models, each designed to address different project needs and team dynamics:

Choosing the right SDLC model depends on factors like project complexity, team size, stakeholder involvement, and the need for adaptability. For example, the agile model is often preferred for complex projects where requirements may evolve, while the Waterfall model can be effective for projects with stable, well-understood requirements. Understanding the strengths and limitations of different sdlc models helps teams select the right SDLC methodology for their unique context.

With an understanding of SDLC models, let's focus on the coding phase and its role in the software development process.

The Coding phase in the Software Development Life Cycle (SDLC) is when engineers and developers start converting the software design into tangible code.

The coding phase transforms design artifacts—architecture diagrams, API contracts, and database schemas—into working software components. This is the development stage where abstract concepts become executable code that users can interact with. At this stage, developers translate the system design into actual code, ensuring that the software functions as intended. The coding phase focuses on transforming key components of the system design into reliable, maintainable, and efficient working software.

Key activities during the SDLC coding phase include adhering to coding standards, utilizing version control, and conducting thorough AI-assisted code reviews to ensure quality.

Before writing code begins, the coding phase depends on validated requirements and approved designs. During the Coding phase, developers use an appropriate programming language to write the code, guided by the Software Design Document (SDD) and coding guidelines. Software developers need clear inputs: system architecture documentation, data flow diagrams, API specifications, and detailed component designs. Without these, teams risk building features that don’t match project requirements.

Once implementation wraps up, the coding phase feeds directly into the testing phase and deployment phase through:

The development phase serves dual purposes. It’s both a production step where software development teams write code and a critical feedback point. During implementation, developers often discover design gaps, requirement ambiguities, or technical constraints that weren’t visible during planning. This makes the coding phase essential for risk assessment and continuous improvement throughout all SDLC phases.

Now that we’ve defined the coding phase and its importance, let’s look at how to prepare for successful implementation.

Strong preparation during late design and early implementation reduces costly rework. For projects kicking off in Q1 2025, getting this foundation right determines whether your team delivers high quality software on schedule.

Before any developer opens their IDE, these artifacts must exist:

Development teams need alignment on how they’ll work together:

Before coding starts, every developer needs:

A team is “ready to code” when any developer can clone the repository, run the build, and execute tests within 30 minutes of setup.

With preparation complete, let’s examine the core activities that define the coding phase.

The coding phase isn’t just writing code—it’s a structured set of activities spanning design refinement to integration. Software engineering practices have evolved significantly, and modern coding involves collaboration, automation, and continuous validation.

Typical development process tasks include:

The organization of these tasks can vary depending on the software development model chosen. Different software development models, such as Waterfall, Agile, or DevOps, influence how the coding phase is structured, managed, and integrated with other SDLC stages.

A typical daily developer workflow looks like this:

How coding is organized depends on your software development methodology:

Large features must be decomposed into manageable pieces. A feature like “User account management” planned for a 2025 release breaks down into:

Each component becomes a user story with acceptance criteria and technical subtasks tracked in project management tools like Jira, Azure Boards, or Linear.

Estimation practices help teams plan sprints effectively:

Project managers use these estimates to balance workload across the team and ensure the project scope remains achievable within the timeline, feeding directly into effective sprint planning and successful sprint reviews in Agile teams.

The tech stack is typically established during the design phase, but concrete framework choices often get finalized during coding. Teams evaluate options based on:

These aren’t exhaustive catalogs—the right choice depends on your project requirements and team capabilities and should align with broader SDLC best practices for software development.

Modern applications follow layered architectures that separate concerns:

When implementing a use case like “customer places an order on 1 July 2025,” developers translate requirements into concrete code:

Throughout this process, it is essential to validate the software's functionality to ensure that the implemented features meet user needs and perform efficiently, supported by practices such as static code analysis for early defect detection.

Design patterns support clean implementation:

This separation of concerns creates loosely coupled architecture where changes in one layer don’t cascade unpredictably through the system.

With the core activities outlined, let’s look at the tools and environments that support efficient coding.

Modern coding relies heavily on tooling for productivity, traceability, and software quality. The right tools can dramatically accelerate the software development process while maintaining code quality.

Reproducible environments matter. Using Docker Compose files, dev containers, or infrastructure-as-code ensures every developer works in conditions matching staging and the production environment, complementing collaborative workflows built around pull requests for code review and integration and AI-augmented remote code review practices.

Git-based workflows are central to the coding phase, enabling development and operations teams to work in parallel without conflicts.

GitFlow Strategy

Trunk-Based Development

A typical feature branch workflow:

Best practices include small, focused commits with clear messages, frequent integration to avoid merge conflicts, and branch protection rules preventing direct pushes to main.

CI servers automatically build and test code whenever developers push changes. Popular platforms include GitHub Actions, GitLab CI, Jenkins, and Azure DevOps, all of which are covered in depth in guides to the best CI/CD tools for 2024.

A typical CI pipeline executes these steps:

The benefit is earlier detection of integration issues. Teams catch broken builds, failing tests, and security vulnerabilities before code reaches shared branches—preventing the accumulation of defects that become expensive to fix bugs later.

CI connects the coding phase to subsequent SDLC steps like the corresponding testing phase and deployment, while keeping focus on developer workflows and fast feedback loops.

With the right tools in place, let’s examine how quality is built into the coding phase.

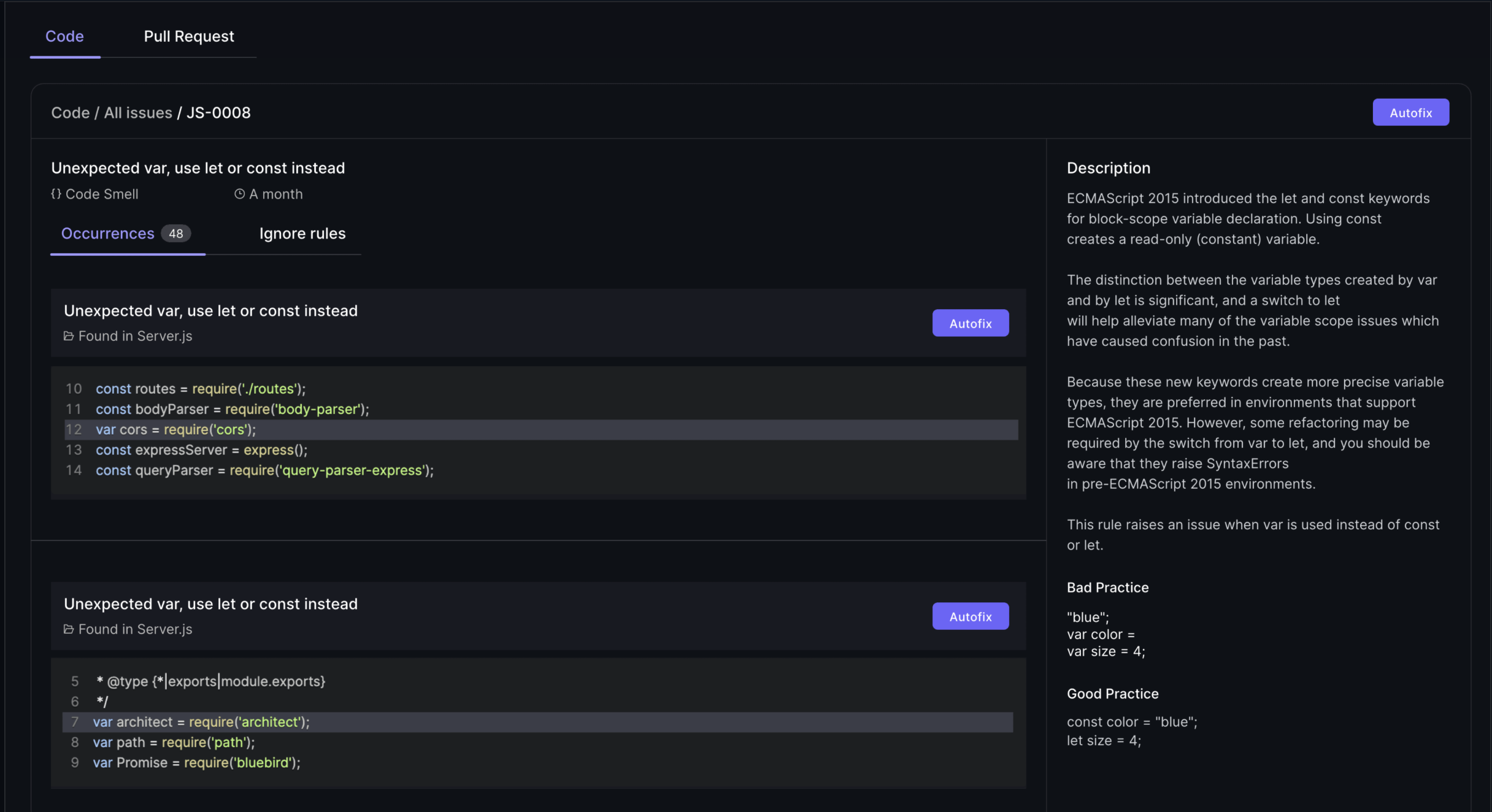

Much of software quality is built during coding, not only caught in later testing phases. Industry data shows that 70% of software failures trace back to poor coding standards, making quality practices during implementation essential.

Quality assurance activities embedded in coding include:

Organizations in 2024-2025 increasingly integrate security checks directly into coding workflows through DevSecOps practices. This includes SAST scanning and dependency vulnerability checks running automatically on every commit and reflects a broader shift toward an AI-driven SDLC across all lifecycle phases and the adoption of AI-powered developer productivity toolchains.

The pull request workflow is the primary mechanism for quality control and directly influences cycle time and pull request review duration:

Review criteria include:

Practical guidelines for effective reviews:

Research shows that implementing version control systems with proper review processes reduced merge conflicts by 70% in multi-team enterprise projects.

The “shift-left” approach means developers write tests alongside or before implementation, catching defects when they’re cheapest to fix. Studies indicate that unit testing during development yields 60-80% bug preemption before system testing.

Test types relevant to the coding phase:

High-quality code includes automated tests committed with the implementation. Teams should perform unit testing as part of their Definition of Done, targeting coverage above 80% for critical business logic.

Static analysis tools enforce coding standards and identify potential issues without running code:

Security-focused tools integrated into coding workflows, alongside broader code quality and maintainability practices, strengthen overall software resilience:

Security testing plays a critical role in the SDLC coding phase by identifying vulnerabilities such as system weaknesses, data breaches, and authentication flaws. It is integrated throughout development, often using automated processes like penetration testing and vulnerability scanning, and is a key part of DevSecOps practices.

These tools help teams address SDLC address security concerns early. Organizations define quality gates—minimum coverage percentages, zero critical vulnerabilities—that must pass before merging.

This approach helps deliver software that remains functional and secure throughout its lifecycle, supporting ongoing maintenance with fewer defects reaching the production environment. Documentation of code and architecture is necessary to ensure long-term maintainability.

With quality practices embedded, let’s see how AI and automation are reshaping the coding phase.

AI-assisted coding has become mainstream by 2024-2025, significantly impacting how software developers work. GitHub reports that tools like Copilot can automate approximately 40% of boilerplate code, freeing developers to focus on complex business logic and underscoring the need to follow AI coding impact metrics and best practices and assess top generative AI tools for developers.

AI capabilities in the coding phase include a growing ecosystem of AI coding assistants that boost development efficiency and AI-driven development platforms that unify engineering data and workflows:

Benefits are significant: faster delivery, reduced manual work on repetitive tasks, and accelerated onboarding for new team members, particularly in distributed teams that rely on AI-powered remote review workflows. However, risks require attention:

AI tools accelerate coding but don’t replace developer judgment. Every suggestion requires evaluation before integration.

Concrete scenarios where AI assists during the SDLC coding phase:

Generating Initial Implementations

A developer writes a function signature and docstring describing the expected behavior. AI suggests the complete implementation, which the developer reviews and refines.

Scaffolding REST Endpoints

Given an OpenAPI specification, AI tools can generate controller stubs, request/response DTOs, and basic validation logic—saving hours of repetitive coding.

Prototyping UI Components

Describing a component’s requirements in natural language yields initial React or Vue component code, including styling and event handlers.

Test Case Suggestions

Based on function signatures and existing tests, AI suggests additional test cases covering edge conditions the developer might overlook.

Refactoring Assistance

During an April 2025 sprint, AI identifies duplicate logic across multiple services and suggests extracting it into a shared utility, complete with migration steps.

All AI output requires review for correctness, performance, licensing compliance, and security before merging. User feedback on AI suggestions helps improve accuracy over time.

With automation and AI accelerating development, let’s see how Agile methodology shapes the coding phase.

Agile methodology has transformed the coding phase of the SDLC by introducing flexibility, collaboration, and a relentless focus on continuous improvement. In Agile, the coding phase is organized into short, time-boxed sprints—typically lasting one to four weeks—where development teams tackle a prioritized set of user stories or features. This approach enables teams to deliver working software incrementally, gather user feedback early, and adapt quickly to changing requirements, especially when combined with lean SDLC practices tailored for startups.

During each sprint, developers collaborate closely, write and refactor code, and perform frequent code reviews to maintain high software quality. Continuous integration and automated testing are integral, ensuring that new code is always production-ready and that bugs are caught early. Agile methodology encourages open communication, regular retrospectives, and iterative enhancements, empowering development teams to improve their processes and outcomes with every sprint. By embracing Agile in the coding phase, organizations can reduce risk, accelerate delivery, and consistently meet customer expectations.

With Agile practices in mind, let’s consider how to deliver scalable software.

Delivering scalable software is essential for organizations aiming to support growth and adapt to changing user demands. Achieving scalable software delivery requires careful attention to system architecture, infrastructure, and robust testing practices throughout the software development process.

A well-architected system lays the foundation for scalability, enabling applications to handle increased traffic and data volumes without sacrificing performance. Leveraging modern infrastructure solutions—such as cloud platforms, containerization, and orchestration tools—gives development teams the flexibility to scale resources up or down as needed. Comprehensive testing, including load and performance testing, ensures that the software remains reliable under varying conditions.

Incorporating DevOps practices like continuous integration and continuous deployment (CI/CD) further streamlines the development process, allowing teams to deliver updates rapidly and with confidence. By prioritizing scalability from the outset, development teams can build software that not only meets current requirements but is also prepared for future growth, ensuring a seamless experience for users and stakeholders alike.

With scalability addressed, let’s look at how the coding phase transitions to testing and deployment.

The coding phase doesn’t end when code compiles—it ends when code is integrated, tested, and ready for formal QA and release. The testing process depends on quality handover from development. Key components such as source code, documentation, test cases, and deployment scripts must be provided to the testing team to ensure a smooth transition.

The goal of the testing phase is to identify and fix bugs, ensuring the software operates as intended before being deployed to users.

Software development teams must provide:

Successful CI builds are promoted through environments:

Feature flags and configuration toggles enable teams to deploy code to production while selectively enabling functionality. This supports scalable software delivery where the final product can be released incrementally.

This approach aligns with customer expectations by enabling faster delivery while maintaining control over feature rollout and supporting customer feedback cycles.

With the handover complete, let’s examine common pitfalls and how to avoid them.

Many SDLC failures trace back to poor practices in the coding phase rather than pure technical limitations. Focusing on the quality of the actual code is crucial to avoid common pitfalls that can lead to costly issues later. Understanding common pitfalls helps teams avoid expensive rework.

In the prevention strategies subsection, it's important to note that proper modular coding allows for easier scalability and future feature additions.

In late 2024, a development team bypassed code review to meet a deadline, introducing a regression bug that affected payment processing. The fix required emergency deployment, customer communication, and three days of recovery effort—far exceeding the time a proper review would have taken.

The maintenance phase inherits whatever quality the coding phase produces. Software projects that cut corners during implementation pay multiples later in support costs, with maintenance potentially consuming 60% of total lifecycle budgets.

Following a structured approach to risk analysis during coding helps identify issues before they reach the testing deployment and maintenance phases.

With pitfalls addressed, let’s conclude with the importance of a strong coding phase in the SDLC.

The coding phase is where requirements and design finally become working software artifacts. It’s the pivotal development stage where planning meets reality, transforming business objectives into the software’s functionality that serves users. Ongoing maintenance is essential to ensure the software remains functional and continues to operate effectively after deployment.

Disciplined coding practices—supported by modern tooling, AI assistance, comprehensive testing, and rigorous reviews—reduce risk and accelerate the entire process. Teams that invest in quality during implementation spend less time fixing bugs in testing and maintenance.

Continuous maintenance is necessary to ensure software remains functional and meets evolving user needs after deployment. The maintenance phase in the Software Development Life Cycle (SDLC) is characterized by constant assistance and improvement, ensuring the software's best possible functioning and longevity. Ongoing support during the maintenance phase addresses issues, applies updates, and adds new features to the software. This phase also involves responding to user feedback, resolving unexpected issues, and upgrading the software based on evolving requirements. Maintenance tasks include frequent software updates, implementing patches, and fixing bugs to ensure software longevity. User support is a crucial component of the maintenance phase, offering help and guidance to users facing difficulties with the software. The maintenance phase is essential for safeguarding the longevity of any piece of software, similar to maintaining a house over time.

View coding not as an isolated activity but as an integrated, collaborative phase connected to planning, design, testing, deployment and maintenance, and operations. The right sdlc model for your organization balances structure with flexibility, enabling software development teams to deliver consistently.

Looking forward, the SDLC coding phase will continue evolving. AI-augmented development, shift-left security practices, and continuous delivery techniques will reshape how traditional software development approaches complex projects. Teams that embrace these changes while maintaining fundamental engineering discipline will build the high quality software that meets customer expectations and supports system performance at scale.

The key components of the SDLC—including planning, design, coding, testing, deployment, and maintenance—work together to deliver high-quality software. Each phase plays a vital role, and ongoing maintenance ensures the software remains functional, secure, and aligned with user needs throughout its lifecycle.

Start by evaluating your current coding practices against the checklists in this article. Choose one area—whether it’s improving code reviews, adding static analysis, or integrating AI tools—and implement it in your next sprint. Incremental improvements compound into significant gains across your software development lifecycle.

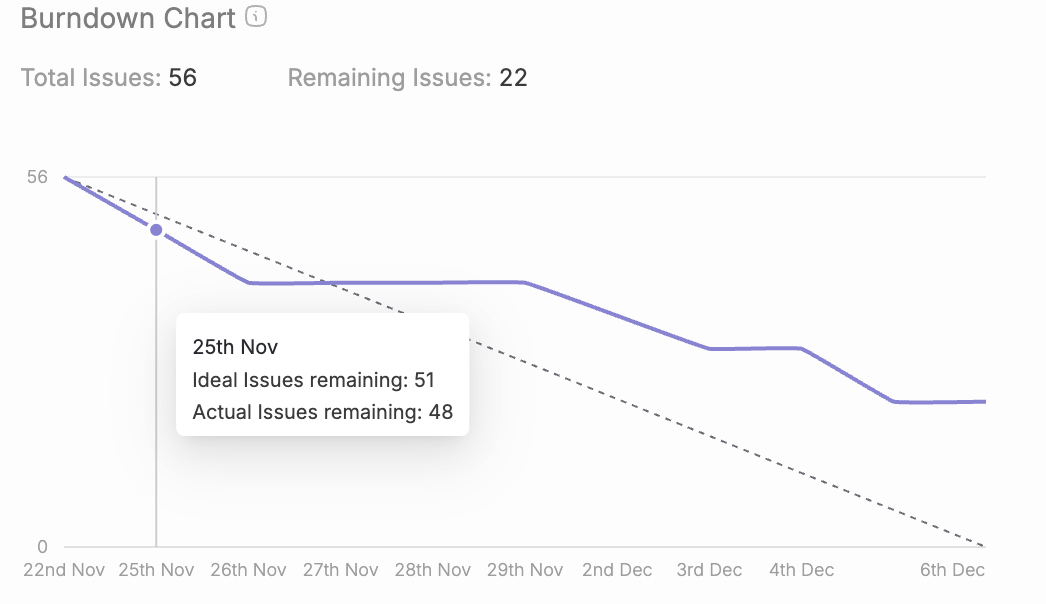

If you’ve searched for “burn ups,” chances are you’re either tracking a software project or diving into nuclear engineering literature. This guide explains Agile project management.

Another common Agile project tracking tool is the burn down chart, which is often compared to burn up charts. We'll introduce the basic principles of burn down charts and discuss how they differ from burn up charts later in this guide.

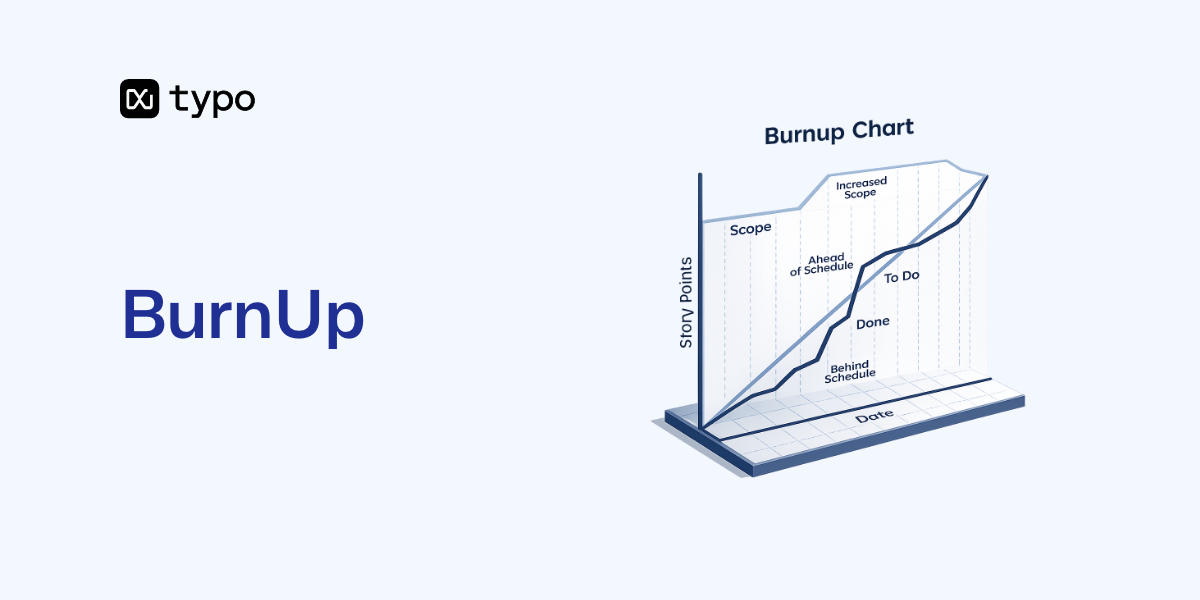

A burn up chart is a visual tool that tracks completed work against total scope over time. Scrum and Kanban teams use it to visualize how close they are to finishing a release, sprint, or project. Unlike a burndown chart that starts high and decreases, a burn up chart starts at zero and rises as the team delivers. A burn down chart visualizes the remaining work over time, starting with the total scope and decreasing as work is completed, and is especially useful for projects with fixed scope.

A typical Agile burn up chart displays two lines on the same graph:

Teams measure progress using various units depending on their workflow, and the choice between story points vs. hours for estimation affects how you interpret the chart:

The horizontal axis typically shows time in days, weeks, or sprints. For example, a product team might configure their x axis to display 10 two-week sprints spanning Q2 through Q4 2025.

Visual elements of an effective burn up chart:

Figure 1: A sample burn up chart for a 6-sprint mobile app project would show a scope line starting at 100 story points, rising to 120 in sprint 3, with the progress line climbing from 0 to meet it by sprint 6.

Burn up charts are favored in Agile environments because they make project progress, scope changes, and completion forecasts visible at a glance. When stakeholders ask “how much work is left?” or “are we going to hit the deadline?”, a burn up chart answers both questions without lengthy explanations.

Key benefits of using burn up charts:

Realistic usage scenarios:

Burn up vs. burndown: key distinction

For a deeper dive into a complete guide to burndown charts, you can explore how they complement burn up charts in Agile tracking.

Prefer burnups when your scope evolves, your team does discovery-heavy work, or you’re managing long-running product roadmaps. A simple burndown may suffice for fixed-scope, short-lived projects like one sprint or a small feature.

The process of creating a burn up chart works across spreadsheets (Excel, Google Sheets) and Agile tools like Jira, Azure DevOps, and ClickUp. These steps are tool-agnostic, so you can apply them anywhere.

Step-by-step process:

Example with actual numbers: Your team begins a release with 120 story points planned. By sprint 3, new regulatory requirements add 30 points, pushing total scope to 150. Your burn up chart shows the scope line jumping from 120 to 150 at the sprint 3 boundary. Meanwhile, your completed work line has reached 45 points. The visual immediately shows stakeholders why the remaining work increased—without making your team look slow.

Configuring a burn up report in Agile tools:

Visual design tips:

Your team should be able to set up a basic burn up chart in under an hour, whether using a spreadsheet template or a built-in tool report.

Reading a burn up chart means understanding what each line, gap, and slope tells you about delivery risk, progress velocity, and scope changes. Once you know the patterns, the chart becomes a powerful forecasting tool.

Understanding the axes:

Interpreting the gap: The space between the scope line and the completed work line at any date represents work remaining. For example:

If your team maintains velocity at 25 points per sprint, you can project completion in two more sprints, assuming you understand how to use Scrum velocity as a planning metric rather than a rigid performance target.

Common patterns and their meanings:

Walkthrough example: Consider a 10-week web redesign project with 150 story points in scope. By week 3, the team has completed only 20 points—well below the ideal pace line that projected 45. The burn up chart makes this gap obvious. After the team removes a critical impediment (switching a blocked vendor integration), velocity doubles. By week 8, completed work reaches 140 points, nearly catching the scope line.

When patterns indicate risk—like a widening gap heading into a November 2025 release—the chart supports practical decisions: renegotiating scope with stakeholders, adding resources, or adjusting the delivery date.

Both burnup charts and burndown charts track progress over time, but they show it from opposite perspectives. A burn up chart displays completed work rising toward scope. A burndown chart displays work remaining falling toward zero.

Key differences:

Concrete example:

When to choose each chart:

Some teams use both charts side by side in Jira or Azure DevOps. This can provide comprehensive views, but teams should agree on which chart serves as the “single source of truth” for status reports and stakeholder communication, while using iteration burndown charts for sprint-level insight.

Burn up charts work at the sprint level, but their real power emerges when applied to releases and multi-team portfolios spanning several quarters.

Release forecasting with projection lines:

Portfolio burn up charts:

Caveats for forecasting:

Advanced setups might integrate burn up charts with other metrics like cycle time, work-in-progress limits, or defect rates, or combine them with additional engineering progress tracking tools such as Kanban boards and dashboards. However, keep the chart itself simple and readable—additional complexity belongs in separate reports.

While burn up charts are invaluable in Agile project management, the term “burnup” also plays a critical role in nuclear engineering, which we’ll explore next.

Update frequency depends on your workflow. For sprints, updating at the end of each day during stand-ups provides early warning of issues. For releases spanning multiple sprints, updating at sprint boundaries often suffices. Kanban teams typically update daily since they don’t have sprint boundaries.

Absolutely. In Kanban, configure the horizontal axis as calendar days rather than discrete sprints. Plot cumulative completed work daily against your target scope. The cumulative flow diagram offers complementary insights, but a burn up chart still works for visualizing progress toward a goal.

Persistent scope growth signals either poor initial estimation, stakeholder pressure, or unclear project boundaries. Use the burn up chart as evidence in stakeholder conversations. Show how each scope increase pushes out the projected completion date, then negotiate trade-offs: add resources, extend timelines, or cut lower-priority features.

Track at both levels if possible. Sprint-level burn up charts help the team during daily stand-ups. Release-level charts inform product managers and stakeholders about overall trajectory. Most Agile tools support both views from the same underlying data.

If your completed work line is tracking parallel to or above an ideal pace line connecting your start point to the target end date, you’re on track. If the gap between your progress line and scope line is shrinking at your current velocity, you should meet the deadline.

For Agile teams:

Start by creating a burn up chart for your next sprint. Watch how making scope and progress visible transforms your team’s conversations—and your ability to deliver on time.

Scrum metrics are quantifiable data points that enable agile teams to measure team performance, track sprint effectiveness, and evaluate delivery quality through transparent, data-driven insights. These specific data points form the backbone of empirical process control within the scrum framework, allowing your development team to inspect and adapt their work systematically.

Direct answer: Scrum metrics are measurements like velocity, sprint burndown, and cycle time that help agile teams track progress, identify bottlenecks, and drive continuous improvement in their development process. These key metrics originated from Lean manufacturing principles and were adapted for iterative software development to address the unpredictability of knowledge work.

By the end of this guide, you will:

Scrum metrics are specific data points that scrum teams track and use to improve efficiency and effectiveness.

Scrum metrics are specific measurements within the scrum framework that track sprint performance, team capacity, and delivery effectiveness. Unlike traditional waterfall metrics focused on time and cost adherence, scrum metrics prioritize team-level empiricism—transparency, inspection, and adaptation—measuring sustainable pace and flow rather than individual productivity.

These agile metrics matter because they provide the visibility needed for cross functional teams to make informed decisions during scrum events like sprint planning, daily standups, and sprint reviews. When your agile team lacks clear measurements, improvements become guesswork rather than targeted action.

Key scrum metrics operate within fixed sprint timeboxes, typically two to four weeks. This cadence creates natural measurement opportunities during sprint planning, where teams measure capacity, and retrospectives, where teams analyze what the data reveals about their development process.

Sprint-based measurement creates a rhythm for tracking agile metrics. Each sprint boundary becomes a data collection point, allowing scrum teams to compare performance across iterations and identify trends that inform future sprints.

Scrum metrics measure collective team output rather than individual productivity. This distinction is critical—velocity is explicitly team-specific and not meant for cross-team comparisons. When organizations misuse metrics to compare agile practitioners across different teams, they distort estimates and erode trust.

Team performance indicators connect directly to sprint-based measurement cycles. Your team delivers work within sprints, and the metrics provide insights into how effectively that collective effort translates to completed user stories and sprint goals.

Metrics support the inspect-and-adapt principles central to agile frameworks. Rather than serving as performance judgment tools, well-implemented scrum metrics drive continuous improvement by revealing patterns and opportunities.

Tracking metrics over time helps identify areas where process changes could improve team effectiveness. A stable trend indicates predictability, increasing trends signal growing capability, while decreasing or erratic patterns flag estimation issues, impediments, or external factors requiring investigation.

Essential metrics for scrum teams fall into three categories based on their focus: sprint execution, quality assurance, and team health. Many agile teams make the mistake of tracking too many metrics simultaneously—focusing on the right combination based on your current challenges yields better outcomes than comprehensive but overwhelming dashboards.

Velocity measures the amount of work a team can complete during a single sprint. It quantifies team capacity by summing the story points of completed work items per sprint. If your team delivers 15, 22, and 18 story points across three sprints, your average velocity is approximately 18 points. This average guides sprint planning to prevent overcommitment and enables release forecasting.

Calculate velocity by tracking remaining story points at sprint end: only fully completed items count toward velocity. Teams typically average the last three to four sprints for forecasting reliability, as this smooths out natural variation.

Sprint Burndown Chart visualizes daily work completion against the sprint plan and helps track progress. Sprint burndown charts plot remaining work against time, creating a visual representation of the team’s progress toward sprint goals. The ideal trajectory runs from total commitment to zero as a straight line, while the actual line—updated daily—reveals real progress. Sprint burndown charts expose risks like flat lines indicating blockages, upward spikes showing scope creep, or steep drops signaling strong momentum.

Story completion ratio measures delivered user stories against committed ones. Completing eight of ten committed stories yields 80% completion. This metric reveals planning accuracy without story points granularity and proves particularly useful for early-stage teams refining their estimation practices.

Throughput is the number of work items completed per sprint, reflecting team output consistency.

Cycle Time measures the duration for a task to progress from "in progress" to "done." Lead Time is the total time from when a request is created until it is delivered. These flow metrics expose efficiency opportunities and help teams measure cycle time improvements over successive sprints.

Escaped defects measure how many bugs or defects were not caught during testing and were found by customers after the release. This indicates gaps in your quality assurance process and Definition of Done. Mature teams target trends below 5% of delivered stories. Defect removal efficiency calculates the percentage of bugs caught before release—aiming for 95% or higher signals a robust testing practice.

Technical Debt Index quantifies suboptimal code that requires future remediation. It balances speed by tracking time spent on debt repayment versus new features. Mature products typically allocate 10-20% of capacity to technical debt management, though this varies based on product age and market pressures.

Team satisfaction surveys and team happiness assessments capture the human factors that predict sustainable delivery. Low team morale correlates with increased turnover and declining productivity—making these leading indicators of future performance problems.

Sprint goal success rate tracks the percentage of sprints where the defined goal is fully achieved. High rates around 85-90% build stakeholder trust, while patterns below 70% highlight overcommitment, unclear acceptance criteria, or unrealistic goals. This outcome-oriented metric aligns with the 2020 Scrum Guide’s emphasis on goals over story completion.

Workload distribution analysis reveals whether work in progress spreads evenly across team members. Concentration of work creates bottlenecks and burnout risks that undermine the team’s success over time.

Customer satisfaction score and net promoter score validate that your team delivers genuine business value. As the ultimate outcome metric, customer satisfaction connects engineering efforts to the organizational mission.

Work in Progress (WIP) tracks the number of items being worked on simultaneously to identify bottlenecks.

Context matters when selecting which metrics to track. A newly-formed agile team benefits from different measurements than a mature team optimizing for flow. Your implementation approach should match your team’s experience level and the specific challenges you face managing complex projects.

Teams should begin formal metric tracking after establishing basic scrum practices—typically after three to four sprints of working together. Premature measurement creates noise without actionable signal.

Define measurement objectives aligned with sprint goals and team challenges—determine whether you’re solving for predictability, quality, or team efficiency.

Select three to five core metrics to avoid measurement overload; start with velocity plus sprint burndown, then add others as these stabilize.

Establish baseline measurements over two to three sprints before attempting to interpret trends or set improvement targets.

Integrate metric reviews into existing scrum ceremonies—sprint reviews for stakeholder-facing metrics, retrospectives for team-focused measurements.

Create action plans based on metric trends and outliers, focusing on one to two improvements per sprint.

Automate collection through development tool integrations to minimize manual tracking overhead.

Teams measure what matters to their current situation. If predictability is your challenge, prioritize sprint execution metrics. If defects keep escaping, focus on quality metrics. If turnover threatens team capacity, measure team health first.

Integrate metric collection with existing development tools like Jira, GitLab, or dedicated engineering intelligence platforms. Manual data entry creates friction that leads to incomplete tracking—automation ensures consistent measurement without burdening team members.

Cumulative flow diagrams visualize how many tasks move through workflow stages over time, exposing bottlenecks through widening bands and throughput through slopes. Modern tools generate these automatically from ticket status changes, providing flow insights without additional tracking effort.

Dashboard creation should follow the principle of surfacing decisions, not just data. An effective agile coach helps teams configure views that prompt action rather than passive observation.

Teams implementing scrum metrics consistently encounter several obstacles. Understanding these challenges in advance helps you navigate them effectively and maintain measurement practices that improve team effectiveness rather than undermine it.

When metrics connect to performance evaluations or bonuses, teams naturally optimize for the measurement rather than the underlying goal. Story points inflate, easy work gets prioritized, and the metrics lose their diagnostic value.

Solution: Focus on trends over absolute numbers and combine multiple complementary metrics to prevent single-metric optimization. Emphasize that metrics exist to help the team improve, not to judge individual contributors. Never compare velocity across different scrum teams—it’s explicitly not designed for this purpose.

Some organizations attempt to track every possible metric simultaneously, creating dashboard overload that prevents actionable interpretation. When everything seems important, nothing gets the attention it deserves.

Solution: Start with three core metrics, establish consistent measurement cadence, and add new metrics only when existing ones are stable and generating insights. Resist pressure to expand tracking until your current metrics drive visible improvements.

Incomplete ticket updates, inconsistent story point assignments, and irregular measurement timing corrupt your data and make trend analysis unreliable.

Solution: Automate data collection through development tool integrations wherever possible. Establish clear metric definitions with the entire development team during retrospectives, ensuring everyone understands what each measurement captures and how to contribute accurate data.

Some team members view metrics with suspicion, fearing surveillance or unfair evaluation. This resistance undermines adoption and can poison team dynamics.

Solution: Involve the team in metric selection from the start. Emphasize improvement over performance evaluation—make it clear that metrics identify pain points in processes, not problems with people. Share positive outcomes from metric-driven changes to demonstrate value and build trust.

Effective scrum metrics drive sprint predictability, quality delivery, and team satisfaction without creating measurement burden. The key insight across all agile methodologies is that metrics serve teams, not the reverse—they provide the transparency needed for informed decisions while respecting sustainable pace.

Research shows that approximately 70% of agile teams track velocity and burndown charts, but only 40% effectively leverage flow metrics like cumulative flow diagrams. High-performing teams achieve 90% sprint goal success rates through consistent, metric-informed empiricism.

Immediate actions to implement:

Related areas to explore include DORA metrics for broader delivery performance measurement, value stream management for end-to-end visibility across your software development lifecycle, and engineering intelligence platforms that automate tracking and surface insights through AI-assisted analysis. As teams mature, flow metrics and throughput measurements increasingly complement traditional velocity tracking.

Metric Calculation Quick Reference:

Scrum Ceremony Integration Checklist:

Recommended Tools by Team Size:

Typo's sprint analysis focuses on leveraging key scrum metrics to enhance team productivity and project outcomes. By systematically tracking sprint performance, Typo ensures that its agile team remains aligned with sprint goals and continuously improves their development process.

Typo emphasizes several essential scrum metrics during sprint analysis:

Typo integrates sprint metrics reviews into regular scrum ceremonies, such as sprint planning, daily standups, sprint reviews, and retrospectives. By combining quantitative data with team feedback, Typo identifies pain points and adapts workflows accordingly.

This data-driven approach supports transparency and fosters a culture of continuous improvement. Typo’s agile coach facilitates discussions around metrics to help the team focus on actionable insights rather than blame, promoting psychological safety and collaboration.

Typo leverages integrated tools to automate data collection from project management systems, reducing manual effort and improving data accuracy. Visualizations like burndown charts and cumulative flow diagrams provide real-time insights into sprint progress and flow stability.

Through disciplined sprint analysis and metric tracking, Typo has achieved improved predictability in delivery, higher product quality, and enhanced team morale. The focus on relevant scrum metrics enables Typo’s development team to make informed decisions, optimize workflows, and consistently deliver value aligned with customer satisfaction goals.

Accelerate metrics are the four key performance indicators that measure software delivery performance and operational excellence across engineering organizations. These research-backed DevOps metrics have become widely recognized as the gold standard for evaluating how effectively teams deliver software and respond to production challenges.

This guide covers DORA metrics implementation, measurement strategies, and practical applications for engineering teams seeking to improve their software delivery process. The target audience includes Engineering leaders, VPs of Engineering, Development Managers, and teams actively using Git, CI/CD pipelines, and SDLC tools who want to gain valuable insights into their development process efficiency.

Accelerate metrics are Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Mean Time to Recovery—proven performance indicators that measure how effectively organizations deliver software while maintaining stability and quality.

By the end of this guide, you will understand:

Accelerate metrics are research-backed key performance indicators developed by the DevOps Research and Assessment (DORA) team to quantify software delivery capabilities. These four key performance indicators provide a balanced view of both velocity and stability, enabling teams to make data-driven decisions about their development process improvements.

The four key Accelerate metrics are deployment frequency, lead time for changes, change failure rate, and time to restore service.

Rather than measuring output like lines of code or commit counts, these metrics focus on outcomes that correlate directly with organizational performance and business value delivery.

The significance of Accelerate metrics stems from extensive research conducted across thousands of global organizations. The accelerate metrics originate from extensive research conducted by Dr. Nicole Forsgren, Jez Humble, and Gene Kim, spanning rigorous research involving over 39,000 professionals across thousands of companies worldwide. This work, published through annual State of DevOps reports and the influential 2018 book “Accelerate: The Science of Lean Software and DevOps,” established the statistical connection between software delivery performance and business outcomes.

Organizations that excel in these DevOps metrics are 2.5 times more likely to exceed profitability targets and demonstrate significantly higher market share growth. This research shifted the industry focus from anecdotal DevOps practices to data-driven measurement of what actually predicts high performing organizations.

Accelerate and DORA metrics are interchangeable terms referring to the same four key metrics for measuring delivery performance. Accelerate metrics are also referred to as DORA metrics, named after the DevOps Research and Assessment Group. The terms are often used synonymously because the DORA team’s research formed the empirical foundation for the Accelerate book’s conclusions.

These metrics fit within broader engineering intelligence initiatives focused on SDLC visibility and operational performance measurement. Understanding how they connect to developer experience frameworks like SPACE helps teams address both the strengths of quantitative measurement and the qualitative aspects of team productivity.

With this foundation established, let’s examine why these specific four metrics were chosen and how each measures a different aspect of the software development lifecycle.

Each accelerate metric captures a distinct dimension of software delivery performance. Two focus on velocity (how fast teams can deliver changes), while two measure stability (how reliable those changes are). Together, they prevent teams from optimizing speed at the expense of quality or vice versa.

Deployment frequency measures how often an organization successfully releases to production.

Deployment frequency measures how often an organization successfully releases code to production or makes changes available to end users. This metric directly reflects a team’s ability to deliver software incrementally and respond quickly to market changes.

High performing teams achieve frequent deployments—often multiple times per day—enabling rapid iteration based on customer feedback. Low performing teams may deploy only once every few months, limiting their responsiveness to user expectations and competitive pressures. High deployment frequency indicates mature DevOps practices including continuous integration, automated testing, and streamlined workflows that reduce friction in the release process.

Lead time for changes defines the time it takes from code committed to code in production.

Lead time for changes tracks the elapsed time from when a developer commits code to when that code runs successfully in production. This metric reveals the efficiency of your entire delivery pipeline, from development through testing to deployment.

Elite teams achieve lead times of less than one hour, reflecting highly automated processes and minimal manual handoffs. Low performing teams often experience lead times stretching to months due to bottlenecks like manual approvals, lengthy testing cycles, or siloed operations teams. Reducing lead time enables faster delivery of new features and bug fixes, directly impacting customer satisfaction.

Change failure rate measures the percentage of deployments that cause a failure in production.

Change failure rate measures the percentage of deployments that result in degraded service, service impairment, or require immediate remediation such as rollbacks or hotfixes. This stability metric indicates the quality and reliability of your deployment practices.

High performing organizations maintain failure rates between 0-15%, demonstrating mature practices like automated testing, canary releases, and feature flags. When failures occur at higher rates (46-60% for low performers), teams spend excessive time on failed deployment recovery time rather than delivering business value. This metric encourages practices that catch issues before they reach production.

Time to restore service measures how long it takes to recover from a failure or incident in production.

Mean time to recovery (MTTR), also called time to restore service, measures how quickly teams can restore service after a production incident or deployment failure. This metric acknowledges that failures will happen and focuses on resilience rather than prevention alone.

Elite teams restore service in less than one hour through robust observability, chaos engineering practices, and well-rehearsed incident response procedures. Low performing teams may take weeks to recover, significantly impacting customer satisfaction and revenue. MTTR reflects both technical capabilities and organizational readiness to respond when problems arise.

These four core metrics create a balanced scorecard that accelerate metrics provide for understanding both speed and stability. With this framework established, let’s explore practical approaches to implementing measurement in your organization.

Moving from understanding accelerate metrics to actually measuring and improving them requires thoughtful integration with your existing development tools and processes. The insights gained from tracking metrics only deliver value when connected to actionable improvement initiatives.

Establishing accurate baseline measurements is essential before pursuing improvement goals. Without reliable data, teams cannot make informed decisions about where to focus their continuous improvement efforts.

Integrating analytics tools with your SDLC platforms enables automated data collection that surfaces actionable insights without burdening development teams with administrative overhead.

These benchmarks, derived from DevOps research across thousands of organizations, serve as directional goals rather than absolute targets. Context matters—a heavily regulated environment may have legitimate reasons for longer lead times due to compliance requirements.

Use benchmarking to identify which metrics represent your biggest opportunities for improvement rather than trying to optimize all four simultaneously. Teams often find that improving one metric (like reducing change failure rate through better testing) naturally improves others (like reducing MTTR because issues are simpler to diagnose).

Organizations implementing accelerate metrics frequently encounter predictable obstacles. Understanding these challenges in advance helps teams establish sustainable measurement practices that drive continuous improvement rather than creating dysfunction.

Many organizations struggle with fragmented toolchains where deployment data, incident records, and code repositories exist in separate systems with no unified view.

Implement engineering intelligence platforms that automatically aggregate data from multiple SDLC tools and provide unified dashboards. These platforms eliminate manual tracking overhead and ensure consistent measurement across various aspects of the delivery pipeline.

When metrics are tied to performance evaluation, teams may artificially inflate deployment frequency by splitting changes into tiny increments or misclassifying incidents to improve MTTR numbers.

Foster psychological safety and focus on team-level improvements rather than individual performance to prevent metric manipulation. Position metrics as diagnostic tools for identifying improvement opportunities, not as evaluation criteria. Involve adopting a culture where metrics reveal problems to solve rather than performance to judge.

Different teams often interpret “deployment,” “failure,” and “incident” differently, making organization-wide comparisons meaningless and preventing accurate benchmarking against DevOps report standards.

Establish organization-wide standards for deployment, failure, and incident definitions with clear documentation and training. Create shared runbooks that define when an event qualifies for each category, ensuring the metrics provide valuable insights that are comparable across the organization.

Some teams resist measurement programs, fearing they will be used punitively or that the overhead will slow down delivery of high quality software.

Emphasize metrics as tools for continuous improvement rather than performance evaluation and involve teams in goal-setting processes. When teams participate in defining targets and improvement approaches, they take ownership of outcomes. Demonstrate early wins by connecting metric improvements to reduced technical debt and improved developer experience.

With these challenges addressed proactively, teams can build sustainable metrics programs that deliver long-term value for DevOps performance optimization.

Accelerate metrics are proven indicators of software delivery excellence that predict organizational performance and business outcomes. By measuring deployment frequency, lead time for changes, change failure rate, and mean time to recovery, engineering leaders gain the visibility needed to drive meaningful improvements in their software development processes.

High performing teams using these metrics achieve 25% faster delivery while maintaining or improving software quality—demonstrating that speed and stability are complementary rather than competing goals.

Immediate next steps to implement accelerate metrics:

Related topics worth exploring include the SPACE framework for understanding developer productivity beyond delivery metrics, cycle time analysis for deeper pipeline optimization, and measuring the impact of AI code review tools on software quality and delivery performance.

When exploring SDLC models, it's important to understand that each model represents a distinct approach to software development, offering unique structures and levels of flexibility tailored to different project requirements. This guide is intended for project managers, software developers, and stakeholders involved in software development projects. Understanding SDLC models is crucial for these audiences because selecting the right model can directly impact project success, efficiency, risk management, and the ability to meet business goals.

This page will compare and explain the most common SDLC models, such as Waterfall, Agile, Spiral, V-Model, and others, helping you identify which SDLC model best fits your team's needs. Whether you're a project manager, developer, or stakeholder, you'll gain clarity on the strengths and limitations of each approach, enabling more informed decisions throughout your software development process.

Software development is the process of designing, creating, testing, and maintaining software applications or systems.

The Software Development Life Cycle (SDLC) concept refers to a structured process that guides the planning, creation, and maintenance of a software product. SDLC methodology outlines the distinct phases and structured approach used to manage and control a software development project, ensuring that project goals, scope, and requirements are clearly defined and met. Software development models, such as Agile or Waterfall provide structured frameworks within the SDLC for managing each stage of the software development project, from initiation to deployment and maintenance.

The software development life cycle (SDLC) is built around a series of well-defined phases, each designed to guide teams toward delivering high quality software in a structured and predictable manner. Understanding these phases is essential for effective project management and for ensuring that software development efforts align with business goals and user needs.

By following these SDLC phases, organizations can manage software development projects more effectively, reduce risks, and deliver solutions that meet both business and technical expectations.

With an understanding of the key phases, let's explore the main SDLC models and how they structure these phases.

SDLC models can be broadly categorized into two main types: sequential and iterative. Sequential models, such as the Waterfall model, follow a linear progression through defined phases. Iterative models, like Agile and Spiral, allow for repeated cycles of development and refinement. It is important to note that there are different SDLC models, each with its own strengths and weaknesses, making them suitable for various project needs.

Among the popular SDLC models are Waterfall, Agile, Spiral, and the V-model. These are just a few examples, as various SDLC models exist to address different project requirements, timelines, budgets, and team expertise. The V-model, also known as a validation model, is a variation of the waterfall methodology that emphasizes verification and validation by associating each development stage with a corresponding testing phase throughout the SDLC.

Additionally, hybrid and risk-driven variants combine elements from multiple models to better suit complex or evolving project demands. Selecting the appropriate SDLC model—or even a hybrid approach—depends on the unique needs and constraints of each software development project.

Now, let's take a closer look at the most popular SDLC models and their characteristics.

Here’s a quick summary of the most popular SDLC models, their definitions, and their typical project scope fits:

Glossary Notes:

For more details on less common SDLC models, see our SDLC Glossary (reference link or appendix).

Selecting the right SDLC model is crucial for project success. The choice should be based on factors like project complexity, risk level (high risk vs. low risk projects), and the need for flexibility or documentation. Using the appropriate model helps ensure quality, timely delivery, budget adherence, and stakeholder satisfaction.

Transitioning from model overviews, let's examine each model in more detail.

The Waterfall model is a classic example of a linear SDLC model. In the Waterfall model, each phase—requirements, design, implementation, testing, deployment, and maintenance—must be completed before the next phase begins. This sequential approach makes the process predictable and easy to manage, especially when requirements are well understood from the start.

The Waterfall model is ideal for projects with the following characteristics:

However, the Waterfall model has some notable drawbacks:

With a clear understanding of the Waterfall model, let's move on to iterative and adaptive approaches.

The Iterative model is based on the concept of iterative development, where the software is built and improved through repeated cycles. Each iteration involves planning, design, implementation, and testing, allowing teams to refine the product incrementally.

A typical iteration lasts between two to six weeks, but the length can be adjusted based on project needs. Shorter iterations enable more frequent assessment of development progress and allow teams to respond quickly to changes.

It is crucial to gather and incorporate customer feedback at the end of each iteration. This feedback helps shape project requirements, prioritize tasks, and ensures the final product aligns with user needs. By continuously monitoring development progress and integrating customer feedback, teams can make necessary adjustments and deliver a product that meets stakeholder expectations.

Next, let's look at Agile, a popular iterative model.

Agile SDLC Model

The Agile SDLC model is designed to accommodate change and the need for flexibility in modern software projects. It is particularly suitable for managing software projects where requirements may evolve over time. Agile emphasizes collaboration among team members and stakeholders, ensuring continuous feedback and alignment throughout the development process.

Agile organizes work into short, iterative cycles called sprints (see glossary note above), allowing teams to deliver functional software quickly and adapt to changing needs. Regular stakeholder involvement is recommended, with reviews and feedback sessions at the end of each sprint to ensure the project stays on track and meets user expectations.

Popular Agile frameworks include Scrum, Kanban, and Extreme Programming (XP). Extreme Programming is known for its focus on iteration, pair programming, and test-driven development, making it highly responsive to change and effective within the broader Agile ecosystem.

Having covered Agile, let's explore models that emphasize risk management and validation.

The Spiral model emphasizes risk assessment in every development cycle, making risk analysis a key activity at each stage. It instructs teams to map risk checkpoints and integrate risk management strategies throughout the process. This approach is particularly suitable for projects with high risk and significant project complexity, as it allows for continuous evaluation and adjustment. The model also recommends the use of prototypes (see glossary note above) in each spiral to address uncertainties and validate requirements early.

Now, let's examine the V-Model, which focuses on verification and validation.

The V-Model, also known as the V-shaped model, is a type of verification and validation model. It extends the traditional waterfall approach by pairing each development phase with a corresponding testing activity, creating a structured and hierarchical process. In this model, testing phases run in parallel with development stages, allowing for rigorous and early detection of errors. Formal test plans are recommended early in the process, ensuring that each phase is thoroughly verified and validated. This makes the V-shaped model especially suitable for regulated projects or those requiring high-quality, error-free software.

Next, let's review models designed for rapid delivery and modular development.

Rapid Application Development (RAD) is an SDLC model that prioritizes rapid prototyping actions, enabling teams to quickly build and refine working versions of the software. RAD is particularly effective for complex projects that require frequent adjustments and rapid delivery, as it allows for iterative development and continuous user involvement. Teams are advised to establish strong user-feedback loops to ensure the evolving product meets requirements. However, caution should be exercised regarding scalability risks, as RAD may not be suitable for very large-scale systems without careful planning.

Incremental Model is an SDLC approach that delivers software in modular increments, each building on the previous. This model is ideal for projects with evolving requirements and a need for early partial releases. It recommends priority-based increment planning, allowing teams to focus on delivering the most valuable features first and adapt to changes as the project progresses.

Big Bang Model is an SDLC model characterized by minimal planning, where development starts with little or no requirements definition. It is typically used for small, low risk projects with minimal planning. The approach is simple but carries high project risk and is not recommended for complex or large projects.

DevOps is an SDLC approach that promotes CI/CD pipeline integration, with continuous delivery being a key practice. Continuous Integration/Continuous Delivery (CI/CD) refers to the ongoing, automated process of integrating code changes and deploying software updates. DevOps also requires a cultural and organizational shift, fostering collaboration and shared responsibility between development and operations teams. This shift impacts the entire organizational mindset and structure, encouraging teams to communicate openly and adopt new practices that improve efficiency and automation.

Teams are encouraged to suggest cross-team collaboration practices and include monitoring and feedback automation to ensure rapid response to issues and continuous improvement.

With a comprehensive understanding of the main SDLC models, let's compare their strengths and tradeoffs.

When comparing popular SDLC models, it's important to understand the key phases that structure each development process. Each model organizes the software lifecycle into distinct stages, such as requirements gathering, design, development, testing, deployment, and maintenance. The way these key phases are sequenced and emphasized can significantly impact project flexibility, cost, time-to-market, and the overall quality of the final product.

Below is a comparison table outlining the tradeoffs between major SDLC models:

Note: Some less common models may not be included in this table. For a full glossary, see SDLC Glossary. For insights on common challenges and how to address them, see understanding the hurdles in sprint reviews.

Understanding these tradeoffs will help you align your project needs with the most suitable SDLC model.

Clearly defining your project scope is essential to ensure that the chosen SDLC model aligns with customer expectations and addresses stakeholder needs. The following steps can help guide your selection process:

By grouping these considerations, you can make a more informed decision about which SDLC model best fits your project.

With your project scope and constraints in mind, let's move on to the practical steps for selecting an SDLC model.

Selecting the right SDLC model involves a structured approach. Use the following checklist to guide your decision:

Following these steps will help ensure a transparent and justifiable model selection process.

Once you've selected a model, the right tools and techniques can further support your SDLC implementation.

To support each phase of the software development life cycle, teams rely on a variety of tools and techniques that streamline the software development process and enhance overall quality. These tools are important because they help automate tasks, improve collaboration, and ensure consistency and traceability throughout each SDLC phase—especially for readers unfamiliar with software development tooling.

Project Management Tools: Solutions like Jira, Trello, and Asana help teams plan, track progress, and manage tasks throughout the development process. These tools facilitate collaboration, ensure accountability, and provide visibility into project status.

Requirements Management Tools: Tools such as Confluence and IBM DOORS assist in capturing, organizing, and tracking project requirements. They help ensure that all stakeholder needs are documented and addressed during the development life cycle sdlc.

Design and Modeling Tools: Software like Lucidchart, Figma, and Enterprise Architect enable teams to create visual representations of system architecture, workflows, and user interfaces. These tools support clear communication and help prevent design misunderstandings.

Development and Version Control Tools: Integrated development environments (IDEs) such as Visual Studio Code and Eclipse, along with version control systems like Git, streamline coding, code review, and collaboration among software developers.

Testing and Quality Assurance Tools: Automated testing frameworks (e.g., Selenium, JUnit) and continuous integration platforms (e.g., Jenkins, Travis CI) help teams conduct thorough testing, catch defects early, and maintain high code quality throughout the software development process.

Deployment and Monitoring Tools: Solutions like Docker, Kubernetes, and platform engineering tools such as New Relic or Datadog support automated deployment, scalability, and real-time performance monitoring, ensuring smooth transitions from development to production.

Collaboration and Communication Tools: Platforms like Slack, Microsoft Teams, and Zoom foster effective communication among distributed development and operations teams, supporting agile methodologies and continuous improvement.

By leveraging these SDLC tools and techniques, organizations can optimize each stage of the development process, improve collaboration, and deliver high quality software that meets user and business requirements.

With the right tools in place, let's look at best practices for implementing your chosen SDLC model.

By structuring your implementation approach, you can maximize the benefits of your chosen SDLC model.

Next, let's consider risk, compliance, and maintenance factors that can impact your project's long-term success.

Addressing these considerations will help safeguard your project against unforeseen challenges.

Now, let's see how these models work in practice through real-world case studies.

These case studies provide practical insights into the strengths and limitations of each SDLC model.

To wrap up, let's summarize key recommendations and next steps for adopting the right SDLC model.

By following these recommendations, you can confidently select and implement the SDLC model that best fits your project's unique needs.

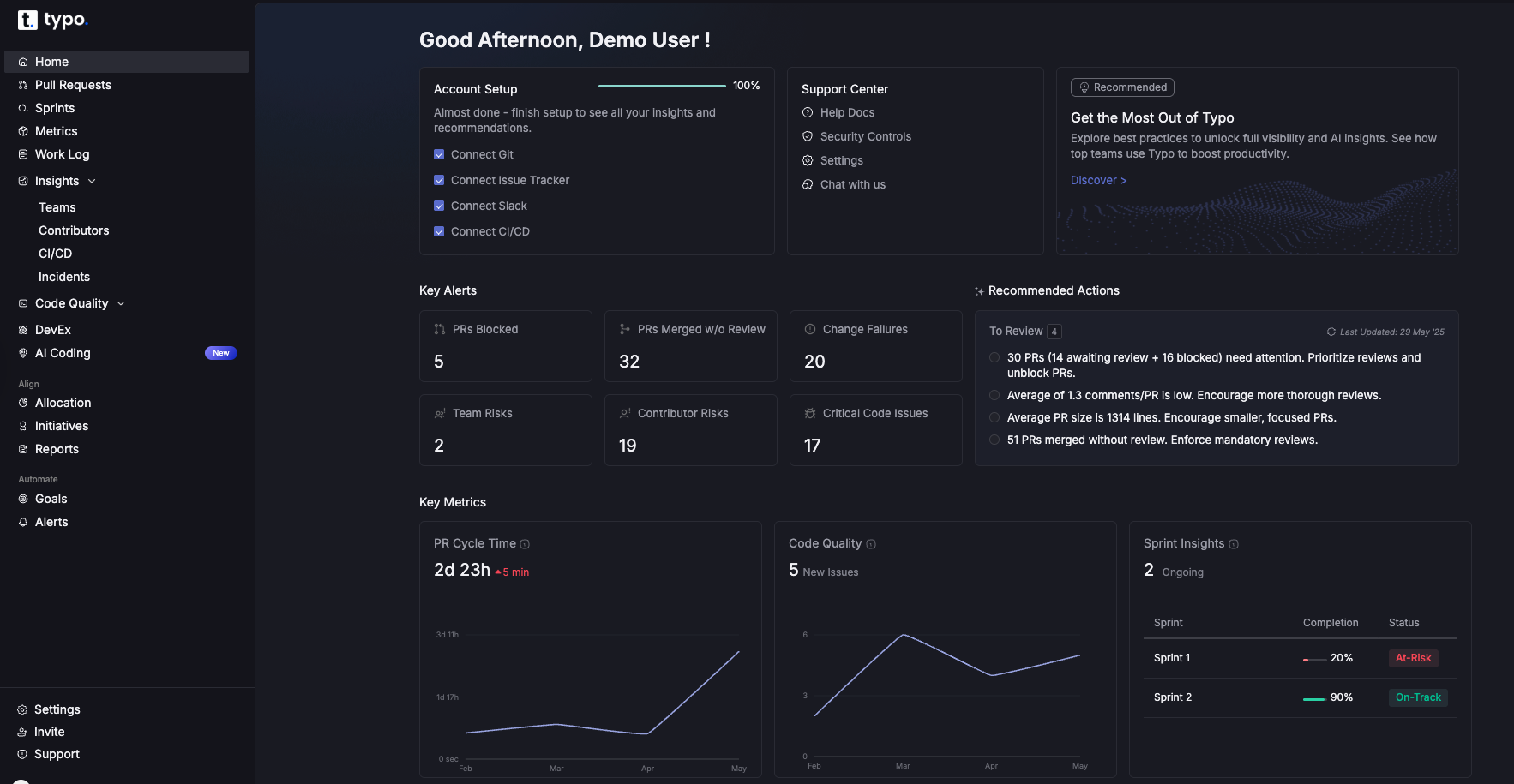

Modern software teams face a paradox: they have more data than ever about their development process, yet visibility into the actual flow of work—from an idea in a backlog to code running in production—remains frustratingly fragmented. Value stream management tools exist to solve this problem.

Value stream management (VSM) originated in lean manufacturing, where it helped factories visualize and optimize the flow of materials. In software delivery, the concept has evolved dramatically. Today, value stream management tools are platforms that connect data across planning, coding, review, CI/CD, and operations to optimize flow from idea to production. They aggregate signals from disparate systems—Jira, GitHub, GitLab, Jenkins, and incident management platforms—into a unified view that reveals where work gets stuck, how long each stage takes, and what’s actually reaching customers.

Unlike simple dashboards that display metrics in isolation, value stream management solutions provide end to end visibility across the entire software delivery lifecycle. They surface flow metrics, identify bottlenecks, and deliver actionable insights that engineering leaders can use to make data driven decision making a reality rather than an aspiration. Typo is an AI-powered engineering intelligence platform that functions as a value stream management tool for teams using GitHub, GitLab, Jira, and CI/CD systems—combining SDLC visibility, AI-based code reviews, and developer experience insights in a single platform.

Why does this matter now? Several forces have converged to make value stream management VSM essential for engineering organizations:

Key takeaways:

The most mature software organizations have shifted their focus from “shipping features” to “delivering measurable customer value.” This distinction matters. A team can deploy code twenty times a day, but if those changes don’t improve customer satisfaction, reduce churn, or drive revenue, the velocity is meaningless.

Value stream management tools bridge this gap by linking engineering work—issues, pull requests, deployments—to business outcomes like activation rates, NPS scores, and ARR impact. Through integrations with project management systems and tagging conventions, stream management platforms can categorize work by initiative, customer segment, or strategic objective. This visibility transforms abstract OKRs into trackable delivery progress.

With Typo, engineering leaders can align initiatives with clear outcomes. For example, a platform team might commit to reducing incident-driven work by 30% over two quarters. Typo tracks the flow of incident-related tickets versus roadmap features, showing whether the team is actually shifting its time toward value creation rather than firefighting.

Centralizing efforts across the entire process:

The real power emerges when teams use VSM tools to prioritize customer-impacting work over low-value tasks. When analytics reveal that 40% of engineering capacity goes to maintenance work that doesn’t affect customer experience, leaders can make informed decisions about where to invest.

Example: A mid-market SaaS company tracked their value streams using a stream management process tied to customer activation. By measuring the cycle time of features tagged “onboarding improvement,” they discovered that faster value delivery—reducing average time from PR merge to production from 4 days to 12 hours—correlated with a 15% improvement in 30-day activation rates. The visibility made the connection between engineering metrics and business outcomes concrete.

How to align work with customer value:

A value stream dashboard presents a single-screen view mapping work from backlog to production, complete with status indicators and key metrics at each stage. Think of it as a real time data feed showing exactly where work sits right now—and where it’s getting stuck.

The most effective flow metrics dashboards show metrics across the entire development process: cycle time (how long work takes from start to finish), pickup time (how long items wait before someone starts), review time, deployment frequency, change failure rate, and work-in-progress across stages. These aren’t vanity metrics; they’re the vital signs of your delivery process.

Typo’s dashboards aggregate data from Jira (or similar planning tools), Git platforms like GitHub and GitLab, and CI/CD systems to reveal bottlenecks in real time. When a pull request has been sitting in review for three days, it shows up. When a service hasn’t deployed in two weeks despite active development, that anomaly surfaces.

Drill-down capabilities matter enormously. A VP of Engineering needs the organizational view: are we improving quarter over quarter? A team lead needs to see their specific repositories. An individual contributor wants to know which of their PRs need attention. Modern stream management software supports all these perspectives, enabling teams to move from org-level views to specific pull requests that are blocking delivery.

Comparison use cases like benchmarking squads or product areas are valuable, but a warning: using metrics to blame individuals destroys trust and undermines the entire value stream management process. Focus on systems, not people.

Essential widgets for a modern VSM dashboard:

Typo surfaces these value stream metrics automatically and flags anomalies—like sudden spikes in PR review times after introducing a new process or approval requirement. This enables teams to catch process improvements before they plateau.

DORA (DevOps Research and Assessment) established four key metrics that have become the industry standard for measuring software delivery performance: deployment frequency, lead time for changes, mean time to restore, and change failure rate. These metrics emerged from years of research correlating specific practices with organizational performance.

Stream management solutions automatically collect DORA metrics without requiring manual spreadsheets or data entry. By connecting to Git repositories, CI/CD pipelines, and incident management tools, they generate accurate measurements based on actual events—commits merged, deployments executed, incidents opened and closed.

Typo’s approach to DORA includes out-of-the-box dashboards showing all four metrics with historical trends spanning months and quarters. Teams can see not just their current state but their trajectory. Are deployments becoming more frequent while failure rates stay stable? That’s a sign of genuine improvement efforts paying off.